Introduction

Artificial Intelligence (AI) is transforming qualitative research as its role shifts from being a tool to a teammate. Previously used primarily to automate transcription or provide preliminary coding, AI is now beginning to be recognized as an intelligent collaborator capable of shaping analysis alongside human researchers. This evolution raises a pressing question: What new forms of human-AI interaction might emerge if we embrace AI not just as an assistant, but as a genuine member of the qualitative research team?

The reflections in this paper come from Research and Development (R&D) efforts and client projects at Stby, a design research agency based in London and Amsterdam. Operating in an environment that actively champions innovation and positive engagement with AI, the team has collectively embraced a culture of iterative learning and experimentation with hybrid-intelligence collaboration.

Stby uses general-purpose AI, namely NotebookLM, Gemini, and ChatGPT, and does not focus efforts on using AI-enabled tools specifically designed for the qualitative research industry (e.g. NVivo, MAXQDA, Delve, ATLAS.ti, Looppanel). This is due to the practicality, accessibility, and ease of collaboration with general-purpose AI. NotebookLM and Gemini are included in Stby’s Google Workspace, ensuring company data stays private and is neither used to train AI models nor reviewed by external people. NotebookLM securely generates answers to queries based on user-uploaded files; referencing only those sources makes hallucinations rare and keeps data fully under user control. For ChatGPT, Stby uses both paid and free individual accounts. Company and research data files are never provided to ChatGPT, as the account privacy policy does not meet Stby’s requirements for handling such information.

This paper shares empirical insights from six distinct case studies that illuminate how AI functions across various tasks: from processing multi-media data and identifying emergent themes, to refining insights for narrative building and collecting relevant evidence. The findings extend beyond individual efficiency gains, delving into the complex interplay between human and AI intelligence within a team context. The co-authors also examined the impact of AI on team dynamics, collaborative relationships, and the evolving roles of researchers. This paper aims to encourage research peers within the community to expand their perceptions of AI, to think beyond AI as a utilitarian instrument and explore its capacity as a collaborator in intellectual inquiry.

Literature Review

The Emergence of AI in Qualitative Research

AI is rapidly reshaping the landscape of qualitative research. Many AIs promise increased efficiency and the ability to handle large datasets, accelerating interpretation and identifying patterns that may be missed manually. An emerging body of literature is shifting from focusing on the technical capabilities of AI as an augmenting tool towards understanding how AI impacts human thinking processes (Lee et al. 2025) (Kosmyna et al. 2025), teamwork (Shaikh and Cruz 2022), the nature of human-AI collaboration (Hibolling et al. 2024), and its ethical implications in the context of qualitative research.

Qualitative researchers exploring AI as a research ‘collaborator’ have suggested various ways to see it as a ‘cultural technology’, combining the machine’s processing power with human intuition (Haouam 2025, 102), and intentionally embracing its capacity to surprise us as a kind of ‘robotic artistry’ (Hibolling et al. 2024). This paper embraces this perspective and explores experiences where AI felt like a true collaborator during the data analysis phase of qualitative research, a moment of the research process that is highly interpretive, focused on knowledge creation and relies heavily on human cognition and cultural sensitivity. This offers an exciting opportunity to examine the idea of human-AI collaboration.

Stby’s experiences collaborating with AI align with what is observed in the literature. AI struggles to fully grasp contextual nuances, such as sarcasm, tone, emotional subtleties (Butt et al. 2025) and cultural context (Hibolling et al. 2024) (Haouam 2025). AI’s reliance on patterns in training data means it often misses what language means beyond what it looks like (Hibolling et al. 2024). Additionally, general-purpose AIs, like the ones Stby works with, are not specialized in qualitative sub-fields like visual ethnography (analyzing images and videos), sensory ethnography (interpreting non-textual sensory data), or autoethnography; these applications remain largely understudied (Panke 2025) (Khattri et al. 2025). But AI can assist with other parts of qualitative research analysis such as data pre-processing, initial coding and thematic analysis (Haouam 2025, 85) (Rao and Singh 2025) (Williams 2024). This collaboration, though, does not come without impact on the identity and skills of individual researchers and teams as ‘knowledge creators’.

An emerging body of literature looks specifically at how AI’s involvement impacts knowledge creation. One study on AI-assisted essay writing links the involvement of AI to cognitive debt in the writer, noting that it changed individuals’ brain connectivity, their sense of ownership of the essay and their information recall (Kosmyna et al. 2025). Another study with knowledge workers describes a self-reported reduction in critical thinking (Lee et al. 2025). Put into the context of qualitative research, this raises questions about how AI affects researchers’ cognitive processes and their ability to generate reliable themes and interpret patterns that lead to insights.

Integrating AI into qualitative research data analysis also introduces ethical concerns, primarily around data privacy, consent, bias, transparency, and accountability (Williams 2024) (Davison et al. 2024) (Haouam 2025). The European Parliament and the UK Government have recently advanced AI regulations, but how these will affect research practices is still being debated by scholars and practitioners. The co-authors discuss how Stby handles these topics in the discussion.

AI’s Impact on Team Dynamics and Collaborative Relationships

In practice, qualitative research is often conducted by teams, yet most current AIs are designed for individual use with little to no collaborative features. On top of that, human-AI team collaboration becomes more complex when team members have different levels of skill, comfort and trust with AI. While AI has been shown to improve the productivity of individual users (Ju and Aral 2025, 2), how does this translate to teamwork?

Research on how AI integration affects team productivity and performance is growing. Zhou and Gorman (2024) found that communication timing differs between human-AI and all-human teams, but it is the sequencing of communicative messages that most strongly predicts team performance. Shaikh and Cruz (2022) showed that under time pressure, teams using an AI assistant relied on it more but performed worse on creative tasks than teams without one. Another study found that human-AI teams (using a state-of-the-art AI platform and ‘personality prompt’ techniques) achieved significantly higher productivity and generated better ad copy than human-only teams (Ju and Aral 2025, 41); this suggests that optimal performance in AI-human collaboration depends not only on the AI’s capabilities but also on human learning and interaction strategies.

There is still a gap in the literature on understanding how AI affects team dynamics. This includes power dynamics, such as who handles AI-generated content and whose interpretations are prioritized; shared understanding, like how AI supports or challenges collective sense-making; and trust, both among team members and between humans and AI. Although some studies have begun to explore trust in the individual use of AI (Zhang et al. 2025, 12), its specific impact on team trust and collaboration dynamics remains under-explored.

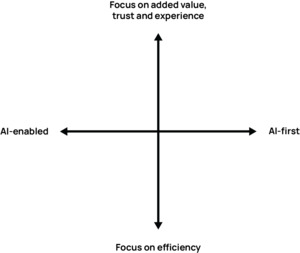

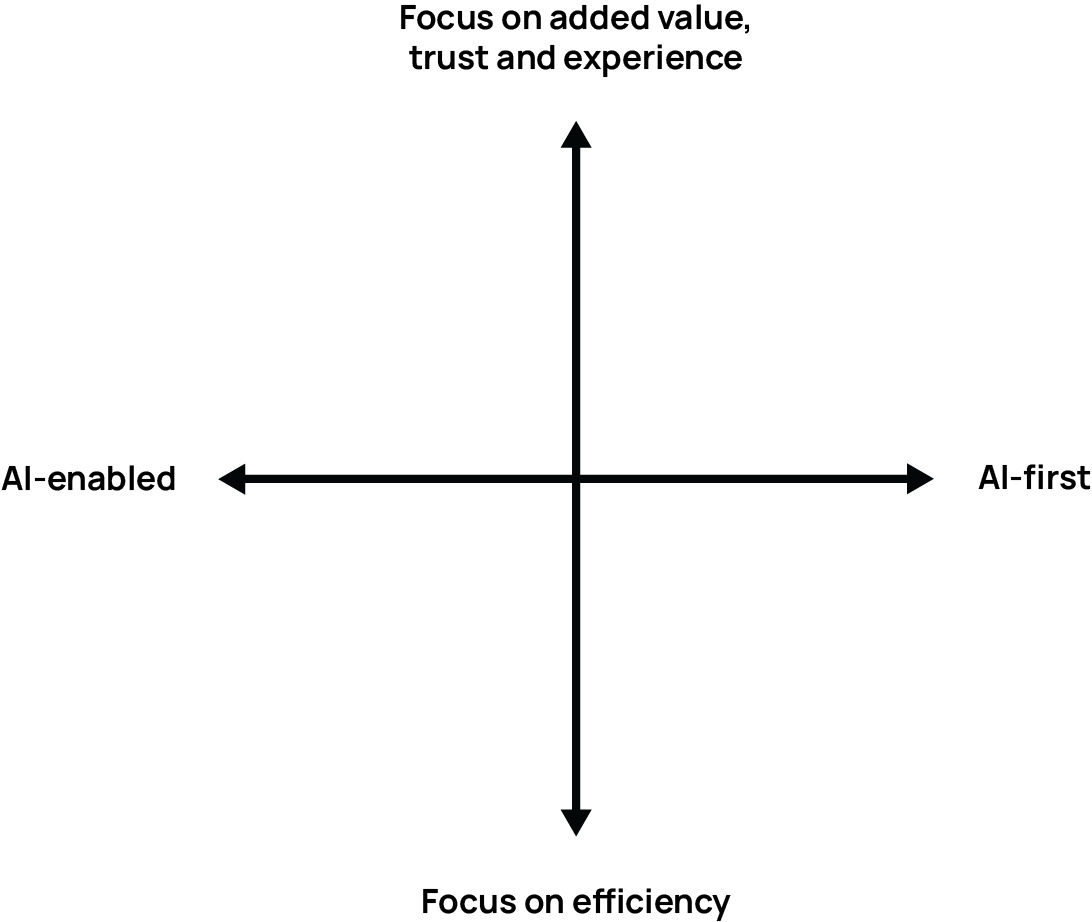

A whitepaper published by Teaming with AI in 2024 offers ideas for how teams can learn and experiment with AI together in order to manage its impact on team collaboration. AI adoption means that a team’s process as well as its experience (individual and collective) will change, requiring colleagues to manage both evolving elements (Hormess and Bailey 2024, 68). One of the frameworks from this whitepaper offers a way for teams to establish where they are in their journey of AI integration (see Figure 1). It categorizes AI processes at work by how AI is used (horizontal axis) and what it is used for (vertical axis). Stby’s activities with AI exist in all four quadrants, but the efforts to involve AI as a collaborator sit mostly in the top right corner. Importantly, ‘AI-first’ does not mean that AI’s output outweighs the researcher’s own analysis work; everyone’s analysis inputs, human or AI, are collectively considered, weighed and integrated into the iterative process that comprises qualitative research analysis.

Key Research Questions

The existing literature clearly points out that AI has a significant impact on individuals and teams in the workplace and that there is growing potential for human-AI collaboration. However, significant gaps remain in understanding its involvement as a collaborator in teamwork and, specifically, its influence on the experience and output of qualitative research. In response, this paper presents empirical evidence from qualitative researchers at Stby on this topic. The following research questions are used to guide the inquiry:

- How can generative AI be involved in qualitative research analysis practices as an intelligent ‘team member’ rather than just an efficiency tool?
- What roles can generative AI play in a human-AI team during qualitative research analysis?
- How does the involvement of generative AI alter team dynamics and collaboration during qualitative research analysis?

Methodology

Action Research

Action Research is a practical research method focused on solving problems and improving practices by simultaneously conducting research and taking action, rather than focusing solely on knowledge generation. Originally developed for social research, it has since been adopted in practice-led fields such as design, education, healthcare and social work (Tisdell, Merriam, and Stuckey-Peyrot 2025). The method consists of a cyclical process of planning, acting, observing, and reflecting.

The four co-authors of this paper followed the steps of Action Research to draw reflections from the six case studies outlined in this paper. The participatory nature of this method supports collective sense-making and real-time adjustments, from one case study to the next, while maintaining a rigorous framework for reflection and insight across iterations.

Analysis Framework

The analysis continued sequentially from one case to the next, providing deeper practical learnings on human-AI collaboration over time, with no attempt to build theoretical models. Following each project, the research team met to debrief their process, dedicating time to discuss their application of and experience with AI. Each team member expressed their individual thoughts and experiences about using AI; common and differing experiences across the team were highlighted. This resulted in reflective documentation of human-AI team collaboration and new action plans to share with other colleagues who were not part of that specific project team, which led to new iterations in subsequent projects. On top of the project-specific reflections, the co-authors of this paper conducted three rounds of overarching reflective analysis on AI’s role as a team member across all six case studies to derive further insights.

Case Studies

Introduction

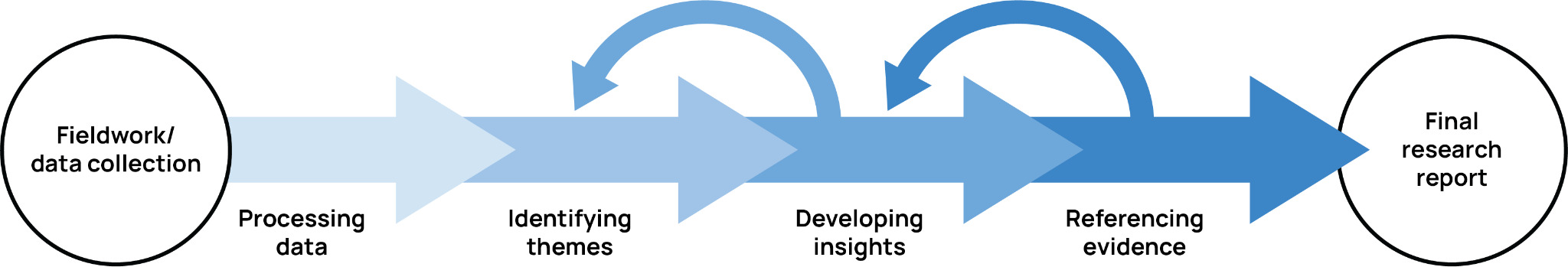

This section outlines learnings from six case studies at Stby where qualitative research teams collaborated with AI during data analysis. The teams followed a common analysis process that involved several rounds of coding, categorization, and clustering, leading to themes and subthemes that then developed into insights or findings (Miles, Huberman, and Saldana 2020). This paper examines the role of AI across four tasks typical in qualitative research analysis: data processing, theme identification, insight development, and evidence referencing. In practice, these tasks generally follow a natural progression, with iterative cycles occurring between some stages (Figure 2).

Case Study Overview

Stby has devoted time and resources through strategic R&D to innovate upon its qualitative research practices. This can take different forms: 1) spending extra time on client projects to generate learnings about new tools or techniques with real data, and 2) devoting a stand-alone R&D project to experimenting with new tools and techniques where no client data is involved. The six case studies include a mix of both R&D types. In client projects, the choice of which AI tool to use and when is often limited by privacy and data protection requirements. However, all case studies were selected to include the following elements:

- The case used qualitative research methods (in-person or remote). Sometimes other research methods were involved in the wider project, but this paper focuses only on reflections derived from the qualitative research methods.
- The case includes the use of at least one generative AI, and the AI was involved in analyzing qualitative data.
- One or more of the co-authors was actively involved in the analysis stage of the project and could observe and reflect on experiences with their human and AI teammates.

Processing Data

Processing data refers to all the steps necessary to prepare the raw data for analysis, which includes getting the data into a format that AI can work with. Since data collection and data analysis often run in parallel in qualitative research, this processing task is ongoing and happens alongside fieldwork. Although Stby’s workflow is mostly digital, AI is still not able to process data independently, without its human teammates.

In line with the literature, general-purpose AI excels at handling structured text and (transcribed) audio recordings but falls significantly short in analyzing non-text data. The AI used in these projects was unable to pick up on visual and non-verbal cues such as facial expressions, gestures, context and tone, which are often critical in qualitative research work. For example, in the AI Service Design R&D project, the team used the design documentary method during fieldwork, which collected short contextual video clips of participant experiences. Working with AI to analyze the raw video was not possible with NotebookLM because it does not support video file uploads. One member spent considerable time consolidating clips, exporting audio, and transcribing it before AI could be involved, causing delays and frustration. Ultimately, the videos were analyzed manually. Had this been a client project, the time lost could easily have set the team back during fieldwork. To collaborate effectively with AI, teams must consider data formats that minimize processing time based on the preferred AI’s capabilities.

In many cases, human assistance is needed during data processing for privacy and data protection purposes. For the Climate Action client project, the research team recorded and transcribed online interviews, and manually removed names and personal information from the transcripts before giving the documents to NotebookLM. This process took some time, but was simple overall and essential for keeping participants’ personal details safe. The diary study responses, as part of the AI Teamwork R&D project, did not contain any personal details, so processing the data to collaborate with AI was straightforward: the team was able to place the raw data directly into NotebookLM.

Identifying Themes

To help identify emergent themes across a dataset, AI operates as a diligent and efficient team member who can quickly spot patterns and points of interest. It is able to quickly scan through text and audio inputs, including interview transcripts, diary study responses and team conversations, and generate thematic groupings or even suggest preliminary insights. This is particularly useful when fieldwork was conducted by multiple researchers. In that case, AI becomes the team member who ‘knows’ the full dataset and each researcher can collaborate with it to understand the emerging themes throughout. However, human ownership of the data is critical in AI collaboration during theme identification, as researchers can only effectively verify patterns and themes when they know the content themselves and can assess AI outputs against their firsthand understanding.

In the Clothing Shoppers and Climate Action client projects, NotebookLM was given access to the interview transcripts. It contributed by identifying repeated topics across different participants, highlighting differences, and offering possible lines of inquiry. These emerging themes, while not definitive, helped the team to move through the initial stages of analysis, providing a shared starting point for deeper interpretation in the next step. When time was limited, such as in preparation for client progress meetings, AI’s speed and capacity to hold a wide data view was valuable.

However, AI’s involvement in this phase changed how analysis unfolded within the team. In several cases, AI was engaged by individual researchers rather than as part of a co-analysis activity involving the entire research team (which was typical of pre-AI times). This sometimes made the process feel isolating and less enjoyable for the researcher, and potentially impacted the intercoder reliability of the insights. For the individual researcher, it’s also more challenging to verify whether an AI’s output is accurate, especially if that researcher was only involved in a portion of the fieldwork. AI’s involvement in this part of the process also raised concerns about replacing work usually done by junior or intern researchers. Such tasks are an important part of their training in how to handle a dataset, so handing them to AI poses a risk to their formative learning experiences. The team soon realized that AI’s ability to surface a broader range of themes and patterns could not replace a good conversation amongst the researchers; a combination of the two was found to be the most useful for analysis.

Through experimentation, the team discovered a new collaborative way to bring AI into the team: to include it as a listening ear at the table (or an online meeting) where researchers debrief their thoughts from the fieldwork or after their first round of coding. This was done seamlessly during the AI Service Design R&D project, where the team sat together, opened ChatGPT on a mobile phone and recorded their conversation about their experiences in the field and speculated on what was emerging intuitively. ChatGPT was then able to distill post-fieldwork team reflections into a coherent summary, which helped the team move forward in an aligned direction.

AI’s involvement in identifying themes depends heavily on human oversight, since it lays the foundation for the deeper analysis to come. Human researchers remain critical gatekeepers who correct misreadings, suggest emerging themes, and bring interpretive richness to any findings. Providing AI with additional contextual prompts to guide interpretation helps the collaboration run smoothly. AI’s suggestions are only as good as the framing it receives, because it lacks the nuanced understanding of context and non-verbal cues that researchers develop through direct fieldwork. The researchers in these projects found the need to consistently articulate project goals to AI both helpful and a hindrance: verbalising their internal processes sometimes brought clarity, but at other times pulled them out of their flow.

Developing Insights

AI can play a helpful role in refining insights into more polished outputs ready for reporting. This task follows early analysis iterations, where emergent themes have already been established and the researchers have spent time organizing these insights into final thematic categories. In the client projects Clothing Shoppers, Media Streaming, and Climate Action, ChatGPT stepped in to assist with re-wording insights to make them more concise, structured, and suitable for stakeholder-facing deliverables, often under tight timelines or page constraints.

Though NotebookLM can work with raw data like transcripts, ChatGPT has proven to be more skilled at writing in a creative and concise style. NotebookLM produces thorough outputs and is excellent at building a chain of evidence from a user-defined data source, but its generated text can be lengthy and often lacks the narrative needed to convey the insights effectively. A workflow that Stby found works well is to take the output from NotebookLM with the researcher’s additions or edits and ask ChatGPT to rewrite it to be more suitable for the audiences of the report before handing it back to the researchers for curation and final refinement. At this stage, ChatGPT does not access either fieldwork data or client data.

Interestingly, co-writing with AI is more than grammar correction or shortening a long sentence. In this capacity, AI acts much like a skilled editorial team member who can quickly translate rough or overly detailed analysis into cleaner, more digestible statements or paragraphs. Stby researchers often write reports in pairs, so it’s common to read and rewrite each other’s work back and forth within one report. Adding AI to such a workflow felt as natural as adding a third team member. For instance, in the Clothing Shoppers client project, ChatGPT helped format findings into structured “Jobs to Be Done” statements, streamlining what is typically a time-consuming synthesis process. In the Climate Action client project, researchers worked with ChatGPT to consolidate long lists of findings created by NotebookLM into a clearer and more readable format to share with the client. Meanwhile, in the Media Streaming client project, ChatGPT supported a quick-turnaround report by tightening up the language of early-stage emerging insights generated by multiple researchers who were all involved in fieldwork.

Still, AI is not an author of the report that comes up with arguments or insights. Researchers always drive the process, correcting misinterpretations and filling in subtle gaps in meaning to ensure that the final statements maintain their nuance and accurately reflect the fieldwork findings. When well guided, AI can add value through speed and linguistic refinement, complementing the researcher’s interpretive judgement.

Referencing Evidence

Qualitative data analysis shifts between inductive and deductive modes, so it’s essential for researchers to be able to reference evidence from data quickly and accurately. Across the Digital Navigation and Media Streaming client projects, AI played a helpful role in assisting the research team in referencing relevant quotes, examples, and stories from large datasets. Traditional qualitative data software (e.g. MAXQDA) can also do this, but AI like NotebookLM can locate supportive data without extensive tagging upfront, which saves researchers time and energy. Many traditional qualitative data software packages now claim to offer similar AI features for referencing, but this has not been explored within Stby yet.

Some limitations around referencing evidence with AI emerged as well. While AI proved adept at surfacing relevant data, its outputs still required human verification, as researchers had to double-check whether the supporting data matched the intended meaning and nuance. Additionally, tasks once delegated to junior or intern researchers, such as combing through transcripts or interview notes for supporting quotes, were now efficiently handled by AI, raising questions in the team about how early-career researchers gain hands-on experience in data handling and interpretation.

Discussion

Across these six projects, learnings emerged about how collaborating with AI alters the experience of a qualitative research team. These overarching findings are discussed here. As AI continues to evolve, the learnings are likely to change as well, but these points serve as a time capsule for the current experience of qualitative researchers with AI.

Becoming a Critical Curator

Working with AI can make some parts of the qualitative analysis process more efficient, but it also comes with new roles and responsibilities. Previously, researchers were responsible for generating every line of analysis, but now this job can be shared by humans and AI. The key skills needed from researchers may no longer be writing text from scratch, locating emerging themes in a data set or pulling out data for evidence, but rather the ability to discern relevant information, manage AI outputs, and maintain strategic analytical clarity. Part of this curator role includes being meticulous about keeping track of prompts and outputs to ensure both traceability and methodological rigor. Ideally, this new skill is developed collectively by creating a shared workflow and sharing successes and failures of collaborating with AI.
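To make the idea of keeping track of prompts and outputs more concrete, the following is a minimal sketch of a shared prompt log, not a description of Stby’s actual tooling. It appends one record per AI exchange to a JSON Lines file that a team could keep alongside its project notes. The function names (`log_exchange`, `load_log`), the field names, and the choice to store a short summary of the output rather than the full output are all assumptions made for illustration.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def log_exchange(log_path, project, tool, researcher, prompt, output_summary):
    """Append one prompt/output record to a JSON Lines file and return it.

    All field names are hypothetical; a team would adapt them to its own workflow.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "project": project,
        "tool": tool,
        "researcher": researcher,
        "prompt": prompt,
        # Assumption: a short summary is stored instead of the raw output,
        # so that participant data does not end up in the log file.
        "output_summary": output_summary,
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_log(log_path):
    """Read every record back, in the order it was written."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A researcher might call `log_exchange("prompt_log.jsonl", "Climate Action", "NotebookLM", "researcher-a", "Which topics recur across participants?", "List of recurring topics; checked against transcripts")` after each exchange, and the team could review the output of `load_log` together during a debrief. An append-only file is used here because it keeps the order of exchanges intact, which is what traceability needs; a shared spreadsheet would serve the same purpose.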

For true collaboration, researchers should begin viewing AI not just as a tool for generating outputs, but as an active intelligent participant whose contributions enrich the research process. When researchers engage AI in collaborative thinking rather than only soliciting deliverables, the resulting insights tend to be richer and more context aware. One approach Stby has found effective for this level of collaboration is allowing AI to ‘listen in’ to meetings and fieldwork debriefs by recording calls or meetings with Gemini or ChatGPT. By including AI in discussions among researchers, it gains a seat at the table during key moments of analysis and deepens the team’s concept of AI as another teammate. The researchers are then responsible for critically checking the meeting summary created by AI to ensure it accurately reflects the conversation.

Working with Cognition Differences

Working with AI as a collaborator does not mean that AI is equal to a human collaborator. It’s important to recognise that ‘the human mind isn’t just predicting the next word in a sentence’ (Brooks 2024). This highlights the fact that human cognition processes are different from how AI generates information, and it’s essential to collaborate with that difference in mind.

All members of the Stby research team experienced “AI overload” at various points, caused by being frequently confronted with enormous amounts of new data generated far faster than human brains can process. Without time to let connections subconsciously form in the minds of the researchers, there is a major risk of overwhelm and cognitive overload. Even though AI can help with many tasks, human researchers still hold responsibility and accountability for the final work, and thus are always under pressure to read, correct and rework AI’s outputs. Instead of researchers being freed from workload, the research timeline somehow feels more compressed due to AI’s speed. According to Rosa’s ‘social acceleration’ theory, technological innovation, social change and the pace of life mutually reinforce each other, leading to a constant sense of time pressure (Rosa 2015). This subjective experience of time is worth exploring further in the future.

Secondly, conversing with AI differs significantly from speaking with a human colleague. Unlike colleagues who defend their ideas and show their thought processes, AI operates as a black box with its own opinions/biases and non-transparent reasoning, hindering the essential back-and-forth communication vital for creating deeper meaning in qualitative research. AI generates content in a different way than human brains think, often making it challenging for researchers to follow the thought process. Human researchers can organically build on each other’s ideas through words or visuals, following thoughts more easily. Recognising this limitation, it is important to prioritize human-to-human moments during data analysis.

The need for researchers to spend energy curating AI-generated content and managing cognitive processing differences changes how teams go about data analysis. Stby is still exploring what this looks like, often re-adjusting on a project-by-project basis. Peer support both by colleagues and the wider community of ethnographers can be beneficial in navigating these changes together.

Taking AI’s Limits into Account

As with any new team member, a period of onboarding must happen. But ‘onboarding’ with AI now needs to happen at the beginning of every task. Giving context to AI via prompting is essential to receive the most accurate outputs. It’s like imagining that a very capable but clueless person has just joined the team. The team would likely need to provide them with the briefing, add them to meetings, and give them notes and any other necessary details to make sure they are up to speed. Researchers may not be used to externalising their detailed thoughts and processes throughout a project like this, but it is precisely the new skill that is needed for effective prompting. In some cases, prompting is a helpful opportunity for researchers to articulate themselves during a project, but it can also be exhausting and risks breaking the state of flow researchers can experience during data analysis (Csikszentmihalyi 2008). Future development in agentic AI may bring change as teams develop AI agents specifically trained on the projects and workflows unique to that team.

Another limitation seen in the case studies is AI’s struggle to analyze images and video, due to file incompatibility and an inability to interpret non-verbal cues. With this understanding, researchers must think carefully about which analysis tasks can be assigned to the AI collaborator and where to focus human time and effort to arrive at an optimised hybrid-intelligence analysis plan.

In line with being aware of data formats and AI, it is also important to now think about the strengths and weaknesses of different AIs on the team. As with any team member, some AIs are better than others at certain tasks. For example, NotebookLM excels at staying within a defined dataset but lacks strong creative writing skills; Gemini is well integrated with Google’s ecosystem but offers inconsistent accuracy; and ChatGPT is strong in pattern recognition and creative synthesis but prone to making things up if not constrained. The wrong match between AI capability and a task, such as using a more creative model when a literal analyst is needed, can distort results or waste time.

Navigating New Team Dynamics

Current general-use AI platforms are designed for solo use, not allowing teams to simultaneously prompt or share outputs. This forces teams into workarounds like creating separate sharing documents, planning in-person prompting sessions to use a single device or screen-sharing in hybrid settings. These approaches are serviceable but often cumbersome and can slow momentum during analysis. A more subtle risk of this is fragmentation of team cohesion. As researchers grow more self-sufficient with individual AI workflows, the collaborative rhythm essential to qualitative analysis can be eroded.

To understand AI’s impact on team dynamics, it is important to look beyond individual projects and examine the underlying structures, workflow and culture that shape teamwork. Stby’s research teams typically consist of 2-4 professionally trained design researchers with varying levels of experience and seniority. When integrating AI into workflows like analysis, maintaining transparency and team cohesion are top priorities. Simply giving everyone access to AI is not enough. Stby fosters AI skill development through a safe environment for experimentation. Researchers prompt AI collaboratively, review its responses together, and invite it into discussions like a member of the team. Colleagues are open about their use of AI, seek second opinions when uncertain, and quickly share individual learnings across and beyond project teams to deepen overall learning. Workflows including AI are regularly updated, and new tools and features are reviewed collectively. Negative experiences such as confusion, overwhelm, or distrust are also discussed as a team for peer support. These practices build trust both between humans and AI, and among human team members. Reducing shame and focusing on values of open communication and shared learning are essential. Teams operating in environments with AI stigma face greater challenges integrating it effectively.

Defining When to Use AI and When Not To

Deciding when to use AI is an essential part of the learning curve of hybrid-intelligence collaboration for individual researchers and teams. If relied on too heavily, the team risks losing ownership over the analysis process as well as harming team cohesion, as discussed earlier.

The decision of when to involve AI and when not to depends on a range of variables. AI’s own limitations in data processing play a big role in this decision, as researchers must predict the extra manual work needed to collaborate with AI and weigh it against the timeframe available. Sometimes AI’s involvement is de-prioritized to give space for human-to-human interactions within the research team. A researcher’s own preference for developing ownership or experiencing moments of enjoyment in work can also be a reason why AI is involved or not. The project team often makes these decisions collectively as they go. Looking back, using a framework to guide these decisions may help the team act more strategically in the analysis process.

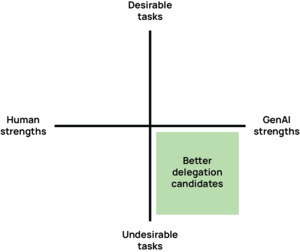

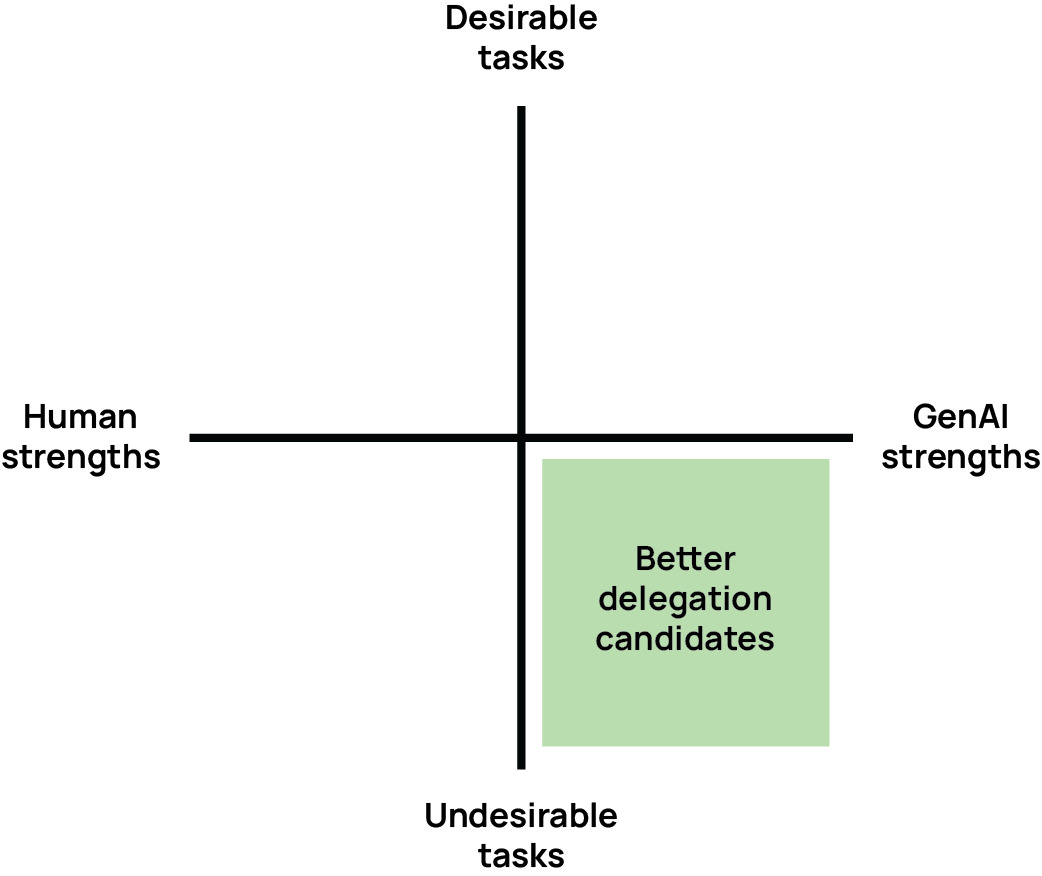

One framework that can offer a starting point on this is the Cognitive Offloading Matrix presented at the EPIC Conference 2024, where the human/AI strengths and the desirability of tasks form two axes (Hutka 2024) (Figure 3). According to Hutka, the tasks that fit with AI’s strengths and are ‘undesirable’ for the human researchers are best suited to delegate to AI. Based on Stby’s case, how ‘desirable’ a task is for human researchers should be explored within the context of teamwork and individual team members’ strengths. Another framework explored by Beverly (2025) consists of a similar matrix with risk and complexity as its axes. Clearly, this is worth a wider conversation amongst the qualitative research community to further articulate what these axes would mean in practice.

Responding to Shifting Privacy Considerations

AI’s integration into qualitative research also brings new ethical complexities. This is particularly present when using general-use AI in analysis. This is why Stby favours enterprise-level AIs that guarantee stronger protections and do not use data for model training. Bringing AI into qualitative analysis has prompted Stby to adapt current data privacy protocols and participant communication. In addition to following GDPR, Stby has also updated their consent forms to include an explanation of how AI will be used, which includes details such as: all personal information will be removed by hand before AI is involved, only closed AI systems that do not use the data to train will be used, and nobody outside the research team can access the files within AI. If participants object to this, the team agrees to manually process their data. These new protocols are regularly reviewed as AI adds new features that impact participant data privacy.

Wider Changes in the Qualitative Research Field

It’s worth noting that there are wider trends in the field that influence human-AI collaboration in qualitative research analysis. Clients are increasingly aware of AI’s capabilities and may push for faster, cheaper turnarounds or even assume that qualitative research can be replicated through synthetic methods such as AI-generated users or AI interviewers (e.g., Outset.ai). This makes it all the more important for researchers to articulate the unique value of humans in qualitative research and to explore and communicate the benefits of hybrid-intelligence collaboration with AI. Being transparent about how and when AI is involved in research helps to identify its strengths and weaknesses, opening up new conversations. This is comparable to the transition when qualitative researchers started integrating the internet and digital tools into their work at the beginning of the 1990s. The challenge today’s researchers face is curiously similar to those faced by previous generations of knowledge workers in finding out how technologies enhance and disrupt their work, as explored in the work of Zuboff (1988), Csikszentmihalyi (2008), Virilio (2012) and Rosa (2015).

Additionally, the shift towards human-AI collaboration is potentially problematic for the inclusion of junior and intern researchers, people who would typically help with tasks like data pre-processing, transcription summarizing, or evidence referencing. AI can take on these tasks and is faster in delivery compared to human counterparts. However, always giving this work to AI to do on its own risks depriving early-career researchers of the formative hands-on experience that develops core analytical instincts, skills that will be needed to eventually collaborate with AI and critically curate information later in their career. One promising approach to combat this is co-prompting: pairing juniors and seniors to jointly guide AI analysis. This encourages skill exchange while preserving human oversight, and keeps juniors engaged in substantive work while allowing them to practice critical evaluation of AI outputs.

Conclusion and Opportunities

Learn to Experiment, Experiment to Learn

Stby’s early adoption of generative AI began with small tasks, gradually evolving into a more collaborative relationship as the team explored its potential. Over time, dedicated members guided this evolution, facilitating reflection and ensuring the wider team stayed aligned. This steady, intentional approach anchored AI’s place as an intelligent team member rather than just a tool for efficiency.

Integrating AI into qualitative research analysis is less about finding a single formula and more about sustaining a culture of experimentation. Stby’s motto “learn to experiment, experiment to learn” has fostered the necessary openness, shared reflection, and willingness to navigate uncertainty together that is needed during significant technological transitions in the field. While every team will chart its own path, the value lies in committing to the process, embracing both successes and failures, and allowing collaboration with AI to mature over time.

Furthermore, Stby is actively taking a lead in conducting research with the Teaming with AI community, a global group of experts and practitioners, from research institutions to consultancies trying to explore the new rules of AI-enabled, human-centered teamwork. Input from knowledge worker teams in design, product/service innovation, technology development, organisational development and business strategy is helping build knowledge about how teams can work better with AI and guide practices for the future.

Signals for the Future

In the near and distant future, AI is expected to make significant advancements that will force qualitative researchers to respond and adapt their practices. Soon, it may be possible to create specialized AI agents that can assist with research work. New developments may also make it possible to work with AI to analyze specific visual, non-verbal and contextual cues in data. Ideally, AI platforms will begin to prioritize collaborative environments so team members can work together on prompting and curation, and be better able to track outputs for transparency. These advancements will require a continued “learn to experiment, experiment to learn” mindset from researchers to understand how they may enhance and inhibit research practices.

Opportunities for Further Research

This paper presented one qualitative research team’s experience with integrating AI in their analysis process. These explorations brought forward many reflections on how human-AI collaboration enhances and hinders analysis and the experiences of researchers themselves. These reflections surfaced many topics for further research. This is a call to the community of qualitative researchers and theorists curious about the intersection of emerging technology, teamwork and qualitative research processes. The topics include:

- The new skills for researchers to work with AI (e.g. curation, strategic AI output orchestration, developing AI agents, etc.)
- AI’s impact on researchers’ sense of time
- Influence of AI on skills of qualitative researchers (e.g. creativity, theme identification, writing skills, etc.)
- Paradox of AI being used to save time resulting in overwhelm and overload
- Team experience of training and collaborating with AI agents as a ‘teammate’ instead of a ‘personal assistant’
- AI enhancing or eroding collective sense-making beyond data analysis, in particular in more future-oriented speculative knowledge creation work
- New tasks for researchers to accommodate AI’s ever-changing capabilities and limitations
- Role of junior and intern qualitative researchers in the age of AI

About the Authors

Katy Barnard is a Design Researcher at Stby in Amsterdam, part of the Quicksand design thinking and innovation agency with offices in Delhi, Bangalore, Goa, London, and Amsterdam. With expertise in qualitative research, design thinking, and digital design, Katy is passionate about exploring how digital technologies shape society and the environment. They have worked with clients including Spotify, Google, Ford, Amazon, What Design Can Do, and more.

Dr. Qin Han is a Senior Design Researcher at Stby in London, part of the Quicksand design thinking and innovation agency with offices in Delhi, Bangalore, Goa, London, and Amsterdam. She has extensive experience with qualitative user research for many international clients such as Google, Spotify, and the International Labour Organisation. In 2010 Qin was awarded the first-ever PhD in Service Design in the UK from the University of Dundee. She also holds a degree in Computer Science and Technology.

Ed Louch is a Design Researcher at Stby in London, part of the Quicksand design thinking and innovation agency with offices in Delhi, Bangalore, Goa, London, and Amsterdam. He has expertise in qualitative ethnographic research, product, and service design. He has a keen interest in understanding the relationship between design and human behavior, and the influences that technology has on society. Ed has been involved in projects for the Tony Blair Institute for Global Change (TBI), Google, YouTube, Spotify, IKEA, Sony, adidas, and many more.

Dr. Bas Raijmakers is the co-founder and Creative Director of Stby, part of the Quicksand design thinking and innovation agency with offices in Delhi, Bangalore, Goa, London, and Amsterdam. He has a background in cultural studies, the internet industry, and interaction design. His main passion is to bring people we design for into design and innovation processes using visual storytelling. He holds a PhD from the Royal College of Art and worked with many clients in the past 25 years, such as Nokia, Google, YouTube, Spotify, the Dutch Government, the United Nations and What Design Can Do.

Generative AI Disclosure

Gemini 2.5 Deep Research was used to generate some of the initial draft text in the literature review section. One author listed potential arguments based on a discussion with the other authors and asked Gemini to write a report covering literature that supports, contradicts or offers alternative hypotheses to these arguments. The instruction specifically asked Gemini to conduct research using only research papers, academic journals and/or research reports published since 2020, given that AI described before 2020 would have a very different nature compared to the generative AI used in these case studies. Some of the generated content was used in the initial draft as a placeholder, but most of the text was re-written or removed in the further iterations of writing. This was done after the author read the original papers and books referenced by Gemini and compared them to the fully written case study and discussion chapters. About half of the current references were identified by Gemini’s Deep Research, and the rest were supplied by research peers or come from the authors’ own collection of books and papers.

Gemini 2.5 was used to transcribe, take notes and write summaries based on meeting recordings between the co-authors at the start of writing the paper and again during later iterations. The generated content was mostly used for writing the case study and discussion sections. Some of the generated text was used in the initial drafts, but nearly everything was re-written or removed by the authors as the writing process progressed.