Introduction

In many organizations, the rush to adopt AI prioritizes speed and scale over purpose and fit. Technical upskilling is treated as the end goal, while deeper questions about context, including how, where, and why AI is used, go unanswered. Missing from this picture is the consideration of whose judgment shapes its application and what tradeoffs are being made in the process.

Helen Nissenbaum’s concept of contextual integrity (2010) informs this view of context: trust depends on keeping information flows aligned with established social norms, and breaking those norms, whether intentionally or through design oversight, can destabilize trust. This illustrates a broader gap between technical skill and contextual understanding, a gap echoed in participatory design (Schuler and Namioka 1993) and human-AI collaboration research (Amershi et al. 2019). These frameworks provide clear principles for responsible use, yet their application in practice is uneven and incomplete. Studies of AI deployment show that ethical frameworks are too often aspirational rather than embedded in operations (Fjeld et al. 2020; Mittelstadt 2019). Many initiatives focus on a single sector or phase rather than a full lifecycle (Brundage et al. 2020). Jobin, Ienca, and Vayena (2019) map the global landscape of AI ethics guidelines and reach a similar conclusion about the distance between principle and practice. The OECD (2019) highlights a related governance challenge: while AI adoption is widespread, oversight structures and adaptive mechanisms lag. Together, these studies underscore why responsible AI requires not only strong principles, but also concrete pathways for operationalizing them.

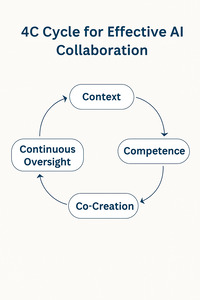

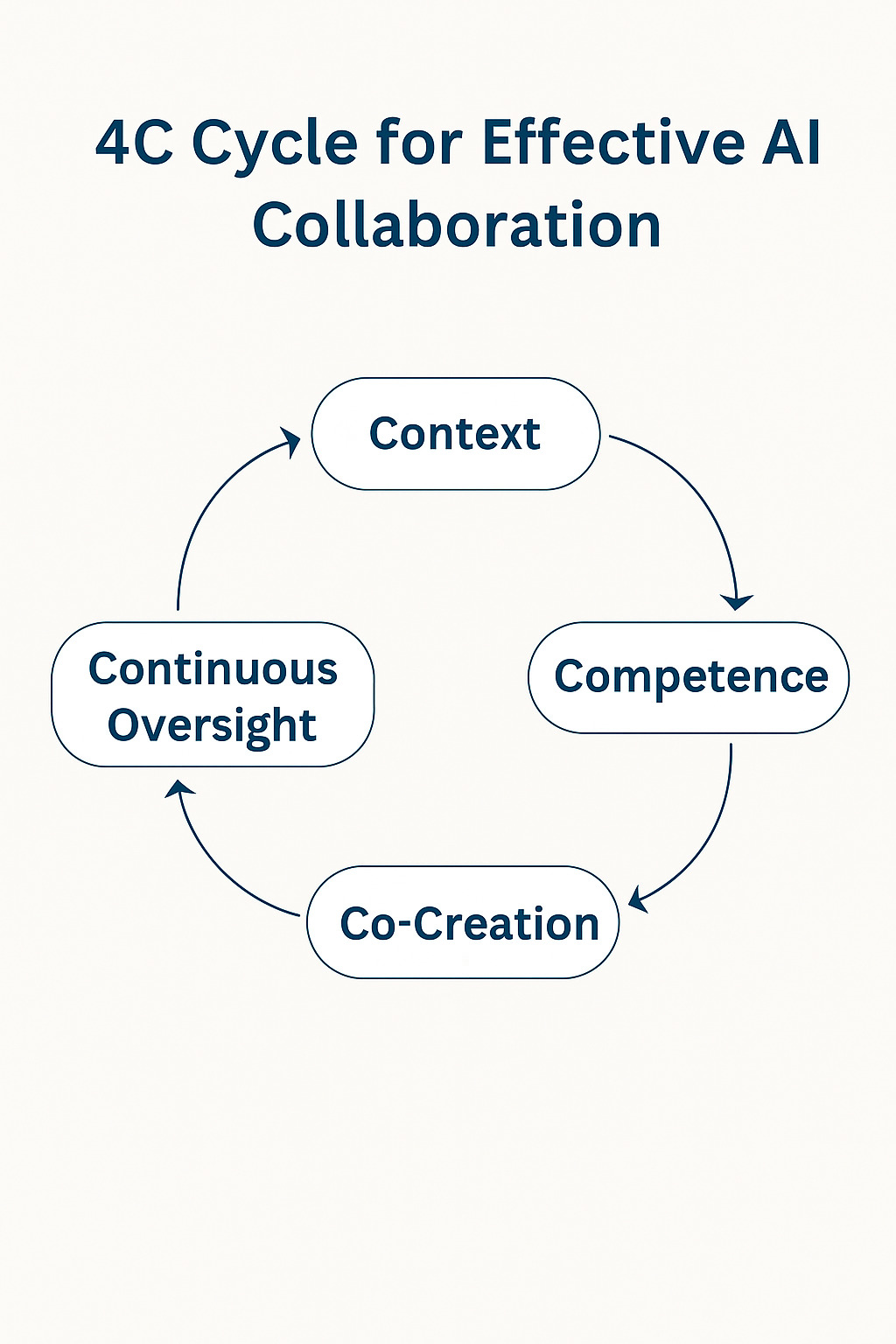

This paper offers one such approach: the 4C Cycle of Context, Competence, Co-Creation, and Continuous Oversight. The cycle was first developed in the Executive Playbook on Responsible AI Reskilling (Kang et al. 2025); I extend and operationalize it here through enterprise research at Salesforce (Kang 2025) and educator and small business sources. The paper begins with challenges in deploying AI tools responsibly, moves to prior literature, introduces the 4C Cycle, tests it across different sectors, and closes with ethical stakes, case studies, and implications for practice.

Section 1: The Gap in AI Adoption

Deploying AI tools in complex organizations faces many challenges. Two of the most critical are the efficiency-effectiveness gap and the lack of embedded oversight. The 4C Cycle is designed to address these challenges specifically.

Defining Effectiveness

Effectiveness centers purpose over performance and strengthens human discernment, not just delivery. It requires measures such as interpretability, psychological safety, and long-term trust, not only throughput or time saved. Yet in many organizations, AI is judged only on technical accuracy and productivity gains. The rush to adopt new tools narrows attention to speed and automation, leaving questions of purpose and tradeoffs unasked.

In healthcare, education, public policy, and enterprise, efficiency-first approaches have already produced inequities and eroded trust. In Section 3, I contrast efficiency and effectiveness to show why the distinction matters and how it sets the stage for the 4C Cycle.

Consequences of Ignoring Context

Across sectors, these failures share a common thread: models reproduce harmful human patterns without sufficient analysis or oversight. In healthcare, a widely used risk algorithm systematically under-identified Black patients by using spending as a proxy for need (Obermeyer et al. 2019). Rajkomar et al. (2018) outline how to build fairness into ML for health care and why governance must address these biases. In education, automated essay scoring (AES) systems penalized creative writing and rewarded formulaic responses (Williamson, Xi, and Breyer 2012). Perelman (2014) critiqued AES systems directly, showing how these systems can be gamed by surface-level strategies while missing the depth and originality valued by human raters. In the public sector, the Dutch SyRI welfare fraud detection system was struck down for violating human rights after targeting low-income neighborhoods (Court of the Hague 2020; Human Rights Watch 2020). And in enterprise, the absence of clear context definitions leads to tools that fail to improve user outcomes (Kang 2025). Without continuous human oversight, such inequities are not corrected, but amplified.

Section 2: Literature Integration

This section enumerates the foundational and recent literature that informs the 4C Cycle, mapping each element to current research and sector-specific evidence. The research gap addressed by the 4C Cycle sits at the intersection of technology ethics, human-AI interaction, and participatory design, yet it remains unresolved in practice.

Contextual Integrity (Enterprise)—Helen Nissenbaum’s concept of contextual integrity (2010) offers a foundational lens for understanding why context-of-use matters. She argues that trust depends on the appropriate alignment of information flows with established norms, and warns of the risks when technologies disrupt these patterns. This concern is reflected in cross-country analysis by the OECD (2019), which reviewed AI adoption across multiple sectors and found that while enterprises are increasingly embedding AI, many still lack the governance structures, lifecycle oversight, and adaptive capabilities needed to scale responsibly. For large organizations, this governance gap reinforces the importance of the Context and Continuous Oversight elements of the 4C Cycle, making clear that effective oversight must be embedded from the outset and continuously adapted to context-specific risks.

Human-AI Collaboration (Cross-Sector)—Amershi et al. (2019) outline guidelines for effective collaboration between people and AI systems, including clarifying roles, ensuring interpretability, and creating feedback loops. These principles map directly to the Competence and Continuous Oversight elements of the 4C Cycle, but they are often deprioritized in favor of rapid deployment and perceived efficiency gains.

Participatory & Stakeholder Design (Small Business)—Participatory Design and Value-Sensitive Design emphasize embedding stakeholder values throughout the design and implementation process. These approaches align with the Co-Creation element of the 4C Cycle, which calls for involving those most affected in shaping how AI is introduced and maintained. In practice, sustained stakeholder participation is rare. Small businesses may be susceptible to “ready-made” AI tools that do not reflect their operational realities, creating barriers to trust and adoption. Ghouse (2025) introduces an AI Democratization Model that addresses these barriers by offering structured, accessible strategies for SMEs. While not framed explicitly as phased adoption, the model highlights incremental approaches that support competence-building and contextual fit over time.

Competence in Education—In education, similar challenges emerge when faculty are encouraged to adopt AI without adequate guidance or opportunities to co-develop policy and practice. Studies on automation bias show that people tend to over-rely on plausible but incorrect outputs (Goddard, Roudsari, and Wyatt 2012). Human-AI collaboration research reinforces the importance of building users’ capacity to interpret and challenge these outputs, which is a central aim of the Competence element of the 4C Cycle. Recent research (Faculty Focus 2024) found that prompting strategies designed for open-ended, reflective engagement improved students’ critical thinking, analysis, and ethical reasoning. This reinforces the point that discernment grows through deliberate, guided interaction, not just tool use. A 2025 MIT Media Lab study on “Your Brain on ChatGPT” found that, under the study’s conditions, participants who consistently relied on large language models (LLMs) for writing tasks showed weaker brain connectivity, lower memory recall, and reduced ability to accurately cite their own work compared to those who worked without AI assistance. The authors do not claim that AI inevitably reduces cognitive performance. Instead, the findings suggest that without intentional scaffolding, over-reliance may create cognitive costs that work against educational goals. This highlights the need for AI-enabled learning environments to deliberately cultivate competence by using AI in ways that challenge, rather than replace, core cognitive processes.

By synthesizing these strands of scholarship, the 4C Cycle offers a cross-sector, operational framework for embedding values and human judgment into everyday AI adoption.

Section 3: The 4C Cycle—Context, Competence, Co-Creation, Continuous Oversight

When AI is framed only through the lens of speed or fairness, we lose sight of its varying levels of agency and the corresponding levels of human responsibility required to guide it. In the Executive Playbook on Responsible AI Reskilling (Kang et al. 2025), co-authored following the Stanford course,⁵ we introduced a spectrum of AI autonomy to help executives and teams better match oversight to capability. This spectrum was informed by enterprise observations and by interdisciplinary readings, seminar debates, and conversations with Stanford faculty that shaped our post-course collaboration as alumni.

Most enterprise deployments today live in the mid-level, what we call the “messy middle,” where AI appears assistive, but decisions are still shaped by invisible human workflows, defaults, and institutional norms. This middle zone is also where ethical ambiguity often lives. It is where alignment with purpose matters most.

Matching oversight to capability is only half the challenge. We also need to decide what we optimize for. The next table contrasts efficiency and effectiveness to make success criteria explicit (Kang et al. 2025).

From Speed to Stewardship: Applying the 4C Cycle

To close the efficiency-effectiveness gap, organizations need a repeatable framework that cultivates judgment as a core practice. The 4C Cycle offers a path for embedding these practices into everyday work, beginning with Context.

1. Context: Why This, Why Now?

As outlined earlier (see Section 2; Nissenbaum 2010), trust depends on aligning information flows with established norms, and breaking those norms destabilizes trust. In practice, context is the set of relationships, expectations, and constraints that give AI systems meaning and legitimacy in a specific setting.

Too often, enterprise AI rollouts begin with a mandate to automate but skip the deeper why. What is the purpose of this tool in this environment? What human value do we hope to unlock? Without shared context, such initiatives risk misalignment, confusion, or resentment.

Defining context is also a choice about inclusion and power. It determines who is invited into the conversation early, whose needs are prioritized, and who pays the price when risks are overlooked. Because context works best when it includes those closest to the work, this is also where the 4Cs begin to overlap. Co-creation can and often should be built into this first stage to ensure that context is relevant, grounded, and actionable.

2. Competence: Building Discernment

In many workplaces, AI training centers on mechanics: how to write the perfect prompt, set up an automation, or spin up a quick draft. But competence is not about speed; it’s about discernment. True competence comes from practice and support that help people move beyond awkward trial-and-error into real fluency. And fluency makes us more effective in areas that AI still struggles with: interpreting ambiguous outputs, spotting bias or misinformation, knowing when not to use AI, and pausing when something feels “off.”

The educator I interviewed described what happens when this sequence is reversed. Students are learning to generate essays with chatbots before they have developed the foundational skills of revision and critique, skipping the development of critical judgment altogether. The same pattern is showing up in the workplace. Entry-level professionals are expected to use AI before developing core critical thinking and analytical skills. That expectation is not just premature, it is risky. Competence requires giving people the space and time to develop discernment, and it grows fully when they are also engaged in shaping the systems themselves, a theme explored in the next section on co-creation.

3. Co-Creation: Designing With, Not For

Efficiency assumes tools are ready to deploy; effectiveness assumes tools must be shaped with the people who use them. Too often, AI adoption decisions are made far from the people who will live with the consequences. That distance leads to tools that do not fit real workflows, frustrate users, and erode trust.

Co-creation flips that script. It brings the people closest to the work into the design and testing process early. In under-resourced environments, co-creation isn’t optional. It’s essential. In small businesses, for example, leaders often emphasize that they need margin, not more tools. Because they operate with little margin for error, ethical alignment is a survival issue, not an abstract one. In classrooms, involving students in defining what ethical AI use looks like helps ensure that guidelines reflect actual learning contexts and encourage shared accountability between instructors and learners.

The 4C Cycle draws from feminist and participatory design traditions (e.g., Schuler and Namioka 1993), which hold that legitimacy comes from the lived experience of those affected. Small business and nonprofit settings offer an especially valuable testing ground for this approach. Rather than assuming a fixed training model, future pilots could explore how the framework holds up in real-world scenarios, with revision as part of the process.

As hinted in the Context-setting phase, Context and Co-creation can be recursive. Insights from co-design sessions can reshape our understanding of context, which in turn influences the next design decisions. Recognizing this dynamic is key to keeping the 4C Cycle adaptable rather than static.

4. Continuous Oversight: What We Measure Is What We Value

The most overlooked stage of AI adoption is what happens after deployment. Governance often focuses on throughput measures, such as the number of prompts or the time saved, but those metrics don’t capture whether people trust the system, understand it, or feel safe using it.

Measuring effectiveness requires new indicators, including:

- Interpretability: Can people understand and question the output?
- Psychological Safety: Can users experiment without fear of blame?
- Ethical Friction: Are teams empowered to name when something feels wrong?

Oversight also depends on baseline safety considerations, such as:

- Protection from bias, harm, or hallucination.
- Permission to be messy in order to learn, fail, and improve together.

This is especially true in classrooms, where the “right” answer may not exist, and in small businesses, where a single bad AI call can lose customers in settings with slim margins and no recovery systems in place.

A core principle is that oversight depth should scale with autonomy: at low levels, use spot checks and rationale checks; at mid-level, use review gates, feedback loops, and audit logs; at high levels, maintain human-in-the-loop supervision with rollback plans and incident review.
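The tiering described above can be written down as a simple lookup, which may help teams check whether the practices they already have match the autonomy level of a given tool. The following is a minimal sketch, not part of the original framework: the names `Autonomy`, `OVERSIGHT_BY_AUTONOMY`, `required_oversight`, and `missing_oversight` are hypothetical, and the sketch assumes each level carries only the practices named for it in the paragraph above (the text does not say whether higher levels also inherit lower-level checks).

```python
from enum import Enum
from typing import Iterable, List, Tuple


class Autonomy(Enum):
    """Illustrative autonomy tiers (hypothetical names, not from the source)."""
    LOW = "low"
    MID = "mid"
    HIGH = "high"


# Oversight practices per tier, copied from the paragraph above.
# Assumption: tiers are not cumulative; each lists only its own practices.
OVERSIGHT_BY_AUTONOMY = {
    Autonomy.LOW: ("spot checks", "rationale checks"),
    Autonomy.MID: ("review gates", "feedback loops", "audit logs"),
    Autonomy.HIGH: (
        "human-in-the-loop supervision",
        "rollback plans",
        "incident review",
    ),
}


def required_oversight(level: Autonomy) -> Tuple[str, ...]:
    """Return the oversight practices listed for an autonomy tier."""
    return OVERSIGHT_BY_AUTONOMY[level]


def missing_oversight(level: Autonomy, in_place: Iterable[str]) -> List[str]:
    """Return the listed practices for this tier that are not yet in place."""
    present = {practice.strip().lower() for practice in in_place}
    return [p for p in required_oversight(level) if p not in present]


if __name__ == "__main__":
    # A mid-level tool whose team so far keeps only audit logs.
    print(missing_oversight(Autonomy.MID, ["Audit logs"]))
```

A gap list like this is only a prompt for discussion; deciding which tier a tool belongs to remains a human judgment that depends on context.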

Our research team at Salesforce established a set of social norms to help the team put these principles into practice by distinguishing what is acceptable from what crosses the line. Oversight begins with making these questions visible, a theme I return to in the case study on building social norms.

Because every organization operates differently, effective oversight must go beyond generic audits and dashboards. It has to be participatory, iterative, and grounded in live feedback, constantly checking not just if the tool is working, but if it’s working for the people who depend on it. Continuous oversight keeps the cycle alive by ensuring that adoption remains an ongoing practice of reflection and adjustment. The insights gathered through this stage then feed back into the first stage, refining the organization’s understanding of Context for the next iteration.

With these foundations in place, the following sections show how the 4C Cycle comes to life in various contexts.

Testing the Cycle across Sectors

In enterprise, the 4C Cycle began as a strategy for reskilling around agentic AI. It emerged both from practitioner needs and from the Stanford program’s call to approach technology, and AI in particular, with ethical and sociotechnical awareness.

In education, the framework was refined through an in-depth interview with an instructor, which served as a key case study for navigating curriculum change, moral uncertainty, and student anxiety. It highlighted where contextual understanding and competence intersect, and where gaps remain in AI-related pedagogy.

In small business contexts, the model raises hypotheses worth testing: how might the 4C Cycle adapt to real-world decisions, tradeoffs, and operational constraints documented in AI adoption research? One open question is whether certain elements need to be simplified or re-sequenced for smaller teams. These are avenues for future exploration rather than established findings.

This research design envisions each sector as a distinct environment while also allowing cross-comparison of how Context, Competence, Co-Creation, and Continuous Oversight operate under different constraints. Possible methods include pre- and post-engagement interviews, observation of tool use, and participant feedback on perceived effectiveness, though these remain future directions rather than current practice.

The 4C Cycle is not static. It is meant to be shaped by those closest to the work, reflecting its own principles of judgment, collaboration and care.

To make the Cycle usable, each “C” can be expressed as a heuristic, a quick reference point for practice:

- Context: Ask how authority, history, and environment shape this AI use.
- Competence: Judge outputs not only for quality, but whether they build discernment.
- Co-Creation: Design with those who live the impact, not only those who deploy the tool.
- Continuous Oversight: Plan for reflection and accountability after launch, not just at rollout.

Section 4: Grounding the 4C Cycle in Practice

Enterprise Case: Social Norms for Effective AI Use

The Salesforce product research team developed social norms to help build trust and guide responsible AI use. These norms emphasize three principles:

- Authentic Human-Centered Curation: AI should support, not replace, the contextual framing and rich nuance that researchers bring.
- Cultivating Thoughtful Engagement: Researchers should deliberately slow down to interrogate AI outputs, building “productive friction” into analysis so that biases, shallow patterns, and gaps are surfaced.
- Alignment and Transparency: Stakeholders need clarity on how AI was used and why.

To translate these principles into practice, we recommend using AI as a skeptical assistant. In this workflow, the researcher first forms their own hypothesis or point of view, then uses an AI model to challenge their conclusions by asking questions such as, “What alternative interpretations exist?” or “What evidence might be missing?” The researcher then validates or refines their perspective using embodied knowledge, contextual cues, and direct observation. This approach treats AI as a catalyst for deeper human analysis, not a shortcut.

As a long-time journalist, I was trained to remain objective. Over time as a researcher, I have learned that strict neutrality can dilute the stakes of a decision and limit the influence of research on enterprise product decisions. Research can sometimes deliver volumes of findings that are technically correct yet too generalized to drive meaningful change, a pattern researchers call ‘True But Useless’ (TBU). It mirrors the efficiency trap introduced earlier. Human research, by contrast, can contribute a distinctive point of view. That perspective surfaces tradeoffs, elevates emotional stakes, and clarifies whose experience counts.

A gap has emerged between AI experimentation and proven impact. While industry reports like McKinsey’s Global AI study show that enterprises are busy designing workflows and retraining staff (McKinsey & Company 2025), the benefits are often undercut by a common set of tensions. Our own research, both internal and with our customers, confirms these tensions:

- Speed vs. Rigor: Faster outputs often come at the cost of nuance and quality.
- Devaluation of Expertise: Domain knowledge, judgment, and creative craftsmanship risk being replaced by generic outputs.
- Lack of Shared Norms: Teams lack agreement on when, where, and how AI should be used.
- Efficiency Paradox: Gains are eroded by rework and quality assurance when AI outputs are inconsistent.
- Experimentation Costs: New tools introduce hidden burdens, from vetting and integration to risk assessment.
- Shifting Roles: Many positions now require both deep subject expertise and AI fluency without clear recognition or career pathways.
- Competency Uncertainty: Teams are unsure what AI mastery looks like or how it will be valued.
- Ethical and Environmental Concerns: Staff sometimes feel complicit in deploying tools with potential harms.

While these findings come from a large enterprise setting, a review of education and small business literature reveals similar patterns: adoption without shared norms, uncertainty about where human judgment matters most, and hidden costs that complicate the promise of efficiency. These recurring themes suggest that the 4C Cycle applies across sectors and lead to the ethical question explored in the next section: what tradeoffs are we willing to accept for speed, and who bears the cost?

Section 5: Ethical Stakes

My ethical orientation toward technology was profoundly influenced by Professor Rob Reich’s opening lecture in the Stanford ETTP course, where he introduced Ursula K. Le Guin’s The Ones Who Walk Away from Omelas (Le Guin 1973).[1] In the story, the prosperity of an entire city depends on the hidden suffering of a single child. That stark image of concealed harm and collective complicity serves as a framing lens for this paper: What tradeoffs are we quietly accepting in the name of speed and convenience?

As a parent of a college sophomore and a high school senior, I see those tradeoffs showing up acutely. The rise of AI introduces new questions, and new tradeoffs, about how young people can find meaningful work and a sense of belonging in a workplace transformed by automation.

Educators are being asked to lead through this uncertainty, preparing students for tools they are still learning themselves. These generational questions aren’t tangents. They are central to what responsible AI adoption means.

Careful evaluation of AI is not a slowdown. By looking beyond easy wins like productivity, we can help people stay capable, curious, and grounded as emerging intelligences reshape how we live and work.

A Call for Moral Clarity

In the real world, the cost of skipping oversight is not hypothetical. When social media was launched, it was framed as neutral infrastructure for “connecting the world.” Few stopped to ask how it might amplify harm. The result was a system where outrage often spread faster than truth, reshaping politics, trust, and public life. The social and ethical blind spots weren’t abstract. They played out in concrete ways:

- It accelerated the spread of misinformation (Tufekci 2015)
- It contributed to widespread mental health harms, particularly among young users (Twenge 2020)
- It deepened polarization through algorithmic systems (e.g. Noble 2018)
- It entrenched business models built on data extraction and surveillance (e.g. Noble 2018; Reich, Sahami, and Weinstein 2021)

Evidence on the mental health effects is mixed and evolving: the U.S. Surgeon General has warned of potential harms (2023), while large-scale analyses find small average associations (Orben and Przybylski 2019) and reviews call for stronger methods (Odgers and Jensen 2020). Still, the harms listed above are not random accidents. They grew out of choices made quickly, at scale, without moral clarity.

We see the same governance gaps in AI deployment today. Oversight mechanisms often fail to anticipate downstream harms or adapt to changing conditions because they are treated as static compliance steps rather than adaptive, collaborative practices.

These blind spots are not unique to social media. A satirical academic paper titled A Mulching Proposal: Analyzing and Improving an Algorithmic System for Turning the Elderly into High-Nutrient Slurry makes this risk vivid (Keyes, Hutson, and Durbin 2019). Written by an interdisciplinary group from the University of Washington, the paper uses ethical satire to critique the FAccT framework (Fairness, Accountability, Transparency). Their argument is that if we optimize for technical fairness without moral clarity, we can justify horrifying outcomes.

If efficiency is all we measure, we shouldn’t be surprised when the results are dehumanizing. Sometimes the problem isn’t just how the sausage gets made. It’s that people are being made into sausage.

The 4C Cycle is designed to close that gap. By making Context, Competence, Co-Creation, and Continuous Oversight explicit, the model embeds governance as an ongoing, participatory process rather than a box-checking exercise. It shifts the emphasis from reacting after harm occurs to actively shaping AI use so that it reflects lived realities, anticipates change, and safeguards human dignity from the start.

In practice, closing this gap may require deliberate slowness, such as phased adoption periods before full deployment. That could take the form of small-scale pilots, “train the trainer” programs that build internal champions, or rotating feedback cycles where frontline users help shape ongoing changes. Scaling this kind of participatory governance isn’t simple, but neither is repairing trust after harm has occurred. The challenge ahead is figuring out which of these approaches can be adapted to fit different organizational sizes and sectors without losing the depth of oversight they require.

As AI tools enter the classroom, the clinic, and the customer service line, the imperative is clear: center human judgment from the start. That means investing in culturally competent datasets, inclusive and interpretable interfaces (Amershi et al. 2019), governance models that protect public interest (e.g., Brundage et al. 2020), and education that fosters discernment as well as technical skills (Selwyn 2019).

Section 6: Case Studies by Sector

The case studies that follow illustrate how Context, Competence, Co-Creation, and Continuous Oversight translate into practice in enterprise, education, and small business environments.

Enterprise: Content as a Strategic Asset

Automation promises faster decisions, lower costs, and more output, but in enterprise content creation, more is not always better. Excess content can obscure critical information, increase maintenance burden, and create conflicting or outdated guidance. At Salesforce, I work with hundreds of writers, strategists, and content designers whose work shapes the customer experience (Kang 2025). Because these practitioners are embedded in product teams, they anticipate needs and design information that enables action.

AI systems, by contrast, are completion engines. They can synthesize at speed, but they lack the situational awareness to distinguish what matters in specific use cases. This mismatch is visible in one of our most common support issues: password resets. Across our ecosystem, more than 1,500 help articles, macros, and technical documents reference logins or resets. Yet AI-generated summaries often blur important distinctions—for example, between mobile login errors, admin resets, and multi-factor authentication issues. When guidance is vague, incomplete, or buried in the wrong document, a simple task becomes a crisis. In urgent moments, customers turn to human agents not by choice, but because the system failed to meet them where they were.

To address this, we are auditing password-reset content and evaluating real customer utterances to align guidance with the actual job to be done. The goal is to reduce unnecessary support cases and improve the customer’s ability to solve problems quickly and confidently.

This case illustrates two stages of the 4C Cycle. Context: defining what customers truly need in this environment and which distinctions matter. Continuous Oversight: evaluating effectiveness not by throughput but by whether people can act with confidence. Co-creation with embedded writers and frontline agents strengthens both stages by grounding decisions in lived workflows.

The lesson extends beyond this use case: documentation is not a static “support artifact” but part of the product experience itself. When AI-generated content is misaligned, even the best technology can fail. AI models often optimize for surface-level accuracy, producing outputs that look correct but lack depth or relevance. This creates an opportunity: as AI handles routine synthesis, writers and researchers can focus on shaping trustworthy, context-aware experiences. The emerging skill is not simply generating text but refining and shortening it strategically to ensure clarity and anticipate a customer’s next question. By pairing AI’s speed with human judgment in tone, nuance, and intent, we can move from high-volume, low-value “slop” toward thoughtful applications that promote learning, adaptation, and deeper insight. In this way, AI becomes more than a generator; it becomes an effective collaborator.

Education: Building Competence Through Discernment and Reflection

The focus of AI adoption often lands on executives, engineers, and enterprise teams. Yet some of the most critical stewards of responsible AI use are educators who are preparing students for a future defined by generative technologies. In many cases, the same educators are still learning to navigate AI tools themselves. Many are asked to integrate AI tools under unclear policies and evolving expectations. The challenge is not just technical fluency but teaching discernment: knowing when to use AI, when to question it, and when to walk away. As the 4C Cycle emphasizes, competence without context is dangerous.

As I explored earlier through The Ones Who Walk Away from Omelas, progress can come with hidden tradeoffs. In education, these tradeoffs can mean sacrificing depth of learning for convenience. I ask: what are we asking teachers to give up, and who bears the cost? Emily Anderson, English department chair at the University of Southern California, explored similar themes in a freshman seminar on Frankenstein, using the text to interrogate ambition, agency, and the consequences of building without care. Studying the original language, which can be dense and layered with meaning, demands interpretation and debate. This helps students practice the kind of judgment that AI shortcuts and summaries cannot foster. Ready-made answers may be convenient, but they undercut the deep knowledge that comes from wrestling with complexity. Anderson’s approach helped inspire both the Executive Playbook (Kang et al. 2025) and the 4C Cycle.¹

Case Study: AI in the Philosophy of Video Games

University of Washington philosophy professor Ian Schnee describes himself as a technology enthusiast.² He uses AI for administrative tasks, research, and lesson preparation, and encourages students to use it as well. He serves on three university working groups focused on AI usage and policy. Still, he notes wide variation in how his colleagues approach AI and believes students will adapt to these tools much faster than faculty.

In one of his upper-level classes, Philosophy of Video Games, students acted as consultants to game developers. Their assignment was to use AI to help draft ethical policy analyses on issues like exploitative monetization strategies or toxic online behavior. To prepare, Schnee built team-based learning skills throughout the quarter. Many students were unfamiliar with policy writing, but with access to AI tools, they could brainstorm together and scaffold their understanding.

“Using AI to do this sort of writing isn’t robbing students of agency. It’s helping them learn how to use AI as a collaborative partner and they learned a ton.”—Ian Schnee

He described the classroom energy as “electric.” His takeaway: when AI is combined with intentional pedagogy, it doesn’t make students lazier, it makes them braver. Schnee’s approach illustrates how competence develops when AI use is paired with guided practice, a lesson that applies far beyond the classroom. As educators across disciplines face pressure to adopt AI without clear guidance, his model shows how structured, collaborative experimentation can cultivate discernment rather than erode it.

Case Study: Tutorbots and Discernment in Logic

In his Introduction to Logic course, Schnee built a custom AI tutorbot for homework support and kept exams in person so that grades reflected learning, not just completion. Weekly reflection prompts (“Am I using this tool to learn, or to shortcut the process?”) reinforced the same discernment skills at the heart of his philosophy course.

“It’s not just about knowing what AI can do, it’s about learning when to trust yourself over the tool.”—Ian Schnee

He has taught this course for more than a decade. Before the pandemic, midterm scores averaged a B–. After COVID, they dropped to a C–. But this past year, with AI-assisted learning and weekly “learning how to learn” segments, student performance exceeded pre-pandemic levels for the first time. He credits the turnaround in part to short 15-minute lecture segments on metacognition, memory formation, the hippocampus, and growth mindset, paired with AI-enabled tasks. The combination, he believes, has helped his students become more capable, self-directed learners.

Small Business: Closing the Digital Divide with Values-Aligned Adoption

Small businesses and nonprofits account for a significant share of global employment. In the United States, small businesses provide nearly half of all private-sector jobs and play a critical role in local economies (U.S. Small Business Administration, Office of Advocacy 2025). Yet their AI adoption challenges differ sharply from those in large enterprises: limited staff, tight budgets, and little capacity for experimentation. In these environments, ethical alignment is not an abstract concern but a survival issue.

Katherine Lee, a board member of SF New Deal³, a nonprofit that supports historically under-resourced small business owners in San Francisco, notes that these organizations are not simply smaller versions of corporations. Their priorities often balance efficiency with community relationships, experimentation, and adaptability. Under-resourced business owners face the dual challenge of sustaining daily operations while also trying to build shared systems without dedicated AI specialists.

AI offers potential benefits. It could streamline back-office tasks, simplify legal language, translate community needs into policy proposals, and aid in financial planning and succession. The greater challenge is implementation: using these tools well requires time, skill, and a tolerance for iteration that many small teams cannot afford. Structural constraints also shape adoption. Minority-owned businesses and those operating in non-English languages face barriers not only of cost, but of trust, accessibility, and data representation. If trained on biased or exclusionary data, AI risks reinforcing the inequities these organizations work to overcome.

Still, the potential for creative application is real. AI could serve as a kind of “companion,” able to surface ideas, reframe problems, and spark experimentation. For many small business owners, the value lies less in formal training and more in having the confidence to ask questions and learn by doing. When they have access to advanced models, not just freemium versions, they can benefit from multilingual communication, simplified legal interpretation, and more efficient operations, while still facing the same risks of hallucination and bias as larger enterprises.

This is where discernment becomes a superpower. The goal is not blind adoption, but values-aligned integration, knowing when to trust the model and when to question it. To explore this in small business settings, future pilots could test the 4C Cycle under real-world conditions. Such efforts would assess how well the framework aligns with small business priorities and whether conversational interaction with AI might lower barriers to entry. If effective, these approaches could help smaller organizations leapfrog some adoption hurdles, redefining what thoughtful, responsible AI use looks like from the ground up.

Section 7: Implications and Action by Audience

The 4C Cycle is only as useful as the contexts in which it is tested. Its implications therefore vary across audiences, from large enterprises to classrooms to smaller organizations. The framework calls for further testing, and each audience offers distinct possibilities for refinement. One next step is to evolve the 4C heuristics introduced earlier into tailored tools for different contexts. These would be quick, usable guides that preserve rigor while helping practitioners act in the moment. Over time, these heuristics could grow into a scorecard or evaluative tool that helps practitioners assess practice consistently across settings.

For nonprofits and small businesses, these ideas remain hypotheses. Early conversations suggest interest, but the 4C Cycle has yet to be tested in resource-constrained environments. The next step is not to prescribe solutions but to partner with organizations willing to experiment, adapting the framework to their realities.

Enterprise: Embedding AI Responsibly into the Customer Experience

- Context: Align AI initiatives with both measurable business outcomes and human-centered goals such as trust, clarity, and equity in customer interactions. Establish and communicate team-wide norms for AI use to ensure consistency, trust, and ethical alignment.

- Competence: Equip employees not only with prompt engineering skills but with the ability to recognize when AI outputs are incomplete, biased, or misaligned with customer needs. Encourage practices such as comparing AI summaries against actual customer utterances.

- Co-Creation: Involve frontline teams, such as writers, support agents, and product managers, early in tool design and workflow integration to surface context-specific needs.

- Continuous Oversight: Move beyond throughput and cost savings as success signals; include metrics that capture decision quality, user satisfaction, and the integrity of customer experience.

Education: Cultivating Discernment as a Core Competence

- Context: Define clear pedagogical goals for AI use, ensuring tools serve the broader learning objectives rather than displacing them.

- Competence: Pair AI-enabled assignments with reflection exercises that help students evaluate when to trust, refine, or reject outputs.

- Co-Creation: Involve students in defining what responsible AI use looks like, fostering shared accountability between instructors and learners.

- Continuous Oversight: Involve students and faculty in ongoing assessment of AI’s role in the classroom, creating shared responsibility for its ethical and effective use.

Small Business: Lowering Barriers Without Lowering Standards in AI Adoption

- Context: Target AI adoption on high-impact pain points that free capacity for relationship-building, creativity, or experimentation.

- Competence: Build AI literacy through small, applied steps using real operational tasks, allowing teams to learn by doing while maintaining quality control.

- Co-Creation: Pilot tools with staff or community partners to ensure alignment with lived needs and workflows.

- Continuous Oversight: Create lightweight, repeatable review routines (like weekly spot checks) to catch and correct issues before they impact customers or operations.

Conclusion: From Efficiency to Effectiveness

The real question is not whether AI can help us work faster, but whether its outputs are aligned with human goals, reflecting the practical realities, values, and constraints of the people it affects. Across enterprise, education, and small business contexts, the rush to adopt AI tends to reward speed and volume at the expense of discernment and trust. This efficiency-effectiveness gap represents both an operational and an ethical failure.

The 4C Cycle (Context, Competence, Co-Creation, Continuous Oversight) offers a practical, adaptable path for closing this gap. It equips enterprises to embed AI without eroding trust, helps educators cultivate discernment as a core competence, and enables small businesses to lower adoption barriers without lowering standards.

At the center of this shift is moral clarity. Alignment is not automatic; it must be designed, tested, and continually adjusted through governance that stays close to the work.

The work ahead is to keep testing, adapting, and building the 4C Cycle as both a framework and a practice. Effectiveness will not come from speed alone, but from collaboration, care, and the discipline of aligning AI with human dignity.

About the Author

Sabrina Kang is a Principal Researcher at Salesforce, focusing on content and content strategy. A former journalist, she values clear writing and supports creative practitioners through her research and practice. She can be reached at sabrinakang01@gmail.com

Disclosures

The author is a full-time employee of Salesforce. The views expressed are the author’s own and do not necessarily reflect those of Salesforce or any other organization. No other competing interests are declared. No external funding was received. Interviews with named individuals were conducted with permission to be identified.

Use of AI tools: This paper was developed with the assistance of AI tools, including Google Gemini and OpenAI’s GPT-5, for meeting and interview summaries, organization, diagram development, and language editing. Literature searches were supplemented using Google Scholar. All AI-generated suggestions were reviewed, verified against sources, and revised by the author.

Rob Reich, lecture for the Stanford Ethics, Technology & Public Policy for Practitioners course, 2024.