intelligences, Intelligence

Across industries, the term intelligence has been narrowed to describe a particular ideal: the capacity to anticipate and shape outcomes through algorithms, analytics, and prediction (Ferraris 2024). In this vision, intelligence is not about curiosity or navigating complexity, but about reducing uncertainty to manageable variables—spotting patterns before they materialize, converting ambiguity into calculated risk, and optimizing processes toward pre-defined ends to maximize profit (Eubanks 2018; Pasquale 2015). It is a managerial and technological aspiration that privileges foresight, control, and efficiency as the highest form of knowing and acting, and treats other ways of knowing as noise (Govia 2020).

It is this singular, outcome-oriented vision that I call Intelligence with a capital “I” (Mackenzie 2017). Not a context-dependent capacity, but an industry paradigm: a way of thinking, building, and governing that treats intelligence as a scalable infrastructure for prediction. The rise of AI has consolidated this view, promising to operationalize it at unprecedented speed and scope (Munk, Knudsen, and Jacomy 2022).

This paper emerges from a concern with this singular, outcome-oriented understanding of intelligence and with the ways it tends to render other forms of intelligence invisible. First, I problematize Intelligence by placing it in a historical context from an anthropological perspective. From a bird’s-eye view, I situate it alongside other universalizing logics that have shaped how we have imagined and pursued change and improvement to question its premises and hint at what might be at stake if we too readily believed in them.

Then I zoom into a strategic design research project my consultancy team carried out for a multinational company to illustrate the tension between Intelligence and intelligences. I focus on how the company’s AI-driven “sales Intelligence” efforts collided, in practice, with forms of intelligence that its sales agents cultivated through years of embodied practice grounded in rhythms and interactions that the algorithms could neither measure, predict, nor replicate.

This tension first surfaced during a design sprint review for the company’s customer relationship management (CRM) system, conceived as the brain of its sales Intelligence and the foundation of its future sales strategy.[1] As the head of the sprint tried to impress a room full of business executives with how the CRM’s AI-driven lead creation and tracking would guide the sales agents’ next steps, the only two who shook their heads in doubt were those who had once been sales agents themselves and knew what it was like to sell. One of them said, “It looks good and impressive, except it is all in a straight line, but our clients never move in straight lines, nor do our sales agents.”

The resulting silence and visible discomfort among the execs were not simply reactions to facing a classic organizational scenario of old habits vs. new tools or resistance to innovation. It was—as I will show—a clash between two regimes of intelligence: one oriented toward prediction and control, the other toward attunement, responsiveness, and negotiation of possibilities (Siri, Olcese, and Spulber 2024). I take this case—which may sound utterly familiar to many—as a point of departure to show how the ever-widening gap (in practice, value, and visibility) unfolds between Intelligence and intelligences and propose two theoretical frameworks to think with and make sense of the multiple and sometimes competing regimes of intelligence at play in organizational life.

By tracing this gap, I don’t simply aim to reveal the difference between Intelligence and intelligences—I think it is a rather clear difference for the kind of audience that this paper addresses. Ferraris (2024), for instance, talks about it as the difference between “performative intelligence”—the kind AI systems simulate, which tend to encode the ontologies of capitalism by optimizing for speed, efficiency, and scale, and flattening everything else to this end (Sheldon and Kumar 2024)—and “living intelligence,” which is metabolically costly, socially embedded, and inherently partial. I also want to show the difference that difference makes; in other words, why this gap matters for the futures we design in the industry and beyond, and how we can bridge it. That is where I position ethnography—and us, the ethnographers—as a perennial frontier (Little 2001): an analytical approach capable of holding open spaces of negotiation where different regimes of intelligence can speak to one another without flattening life into a single, totalizing logic. In this sense, practicing ethnography in industry in times of Intelligence becomes a practice of ethical and political refusal (Simpson 2014): one that defies unilinear paths to tackle the complexity and diversity of lived experience.

The Problem beyond the Hype

Not a day goes by without yet another headline, email, or LinkedIn post about the latest breakthrough related to Generative AI. In this endless stream, GenAI is cast as the natural heir to human intelligence—only faster, sharper, more efficient, and exponentially more capable, and more so with every passing day. The future is cast as one of Intelligence, and we are all urged not to fall behind or face the consequences; this is the worst the AI will ever be, say the advocates, faithful to a narrative of perpetual and inevitable improvement.

I am not an expert in AI as a technology. For that reason, I would describe myself as neither an advocate nor a skeptic. I am, at best, AI curious: curious not only about what it is, how it works, and what it can and can’t do (Narayanan and Kapoor 2024), but also about how it is talked about and mobilized in the industry and public discourse.

On this last point, what’s clear is that Intelligence advocates promise a sort of omnipresence and omnipotence in managerial terms: efficiency, predictability, control, and optimization at an unprecedented scale in every aspect of our lives. Reflecting on this discourse of hype, I see that this imagery often verges on the theological. The advocates predominantly present GenAI as if it were a soteriological phenomenon, here to save us from uncertainty and waste—of time, resources, and even human fallibility. Simultaneously, however, skeptics remind us that AI serves as a mechanism for the extraction and exploitation of data, ecological resources, artistic and intellectual value, with very little—speaking generously—concern for consent, ethics, and responsibility.

From an anthropological perspective, this picture seems neither new nor surprising. GenAI is part of a long lineage of technological tools designed to extend human capacities—for instance, language, writing, navigation, the printing press, the Internet—each once heralded as an evolutionary leap (Ferraris 2024). Both the vision and the caution for GenAI have been there for much longer than the current hype: Think of HAL 9000 in 2001: A Space Odyssey, for instance: a machine intelligence imagined as the next step in human evolution, but also as a figure of control, opacity, and eventual betrayal. So, the current moment of hype and fascination is not only a result of the technological artefact itself, but also of discourses that have been surrounding it for a long time: a universalizing and future-oriented (“it will only get better”) logic that frames Intelligence as the only viable, inevitable, and even morally necessary technological trajectory for the advancement and improvement of the well-being of humankind.

I want to point out that this is the same narrative arc that animated the civilizing mission of modernity (Goody 2006; Ortner 1984; Sahlins 1972; Wolf 1982) in the 19th century or of international development (a.k.a. neoliberalism) (Li 2007; Rist 1997; Tsing 2004) in our lifetimes—both claiming to improve the human condition while advancing particular linear visions of the world, resulting in or restoring the world dominance of the West through the exploitation, dispossession, and dependency of the rest.

Marshall Sahlins famously unsettled the Western myth of linear progress in The Original Affluent Society (1972), provocatively demonstrating what would happen if “affluence” were to be measured not by material production and accumulation, but by the absence of hunger and the abundance of leisure time in a society. Measured this way, the so-called “primitive” and “backwards” societies would appear more affluent, and indeed, much more progressed, than “modern” industrial ones. Let’s do a similar thought exercise and flip the script written around Intelligence today: what if we measured it not by the accumulation of data, the accuracy of prediction, or the speed of decision-making, but by the absence of hunger, starvation, and genocides, the distribution of leisure time, or the depth of understanding or the quality of relationships between people, or between humans and the environment? Would we still come off as more intelligent than ever before?

The problem beyond the hype, then, is not simply a matter of coming up with a definition of intelligence. If we attempted it, we might find ourselves oscillating between two poles: one that equates intelligence with prediction and control, and another that grounds it in interpretive richness, situated sense-making, and the ability to navigate the complexity of life.

Here, I don’t use the word complexity lightly, nor as a mere synonym for complicated. The two are not the same. Anthropologists often remind us that the whole is more than the sum of the parts.[2] It is a way of pointing to this distinction: that while the complicated can be broken down into constituent pieces, the complex exceeds such reduction. Complexity lies in the emergent qualities of relations themselves—qualities that cannot be fully understood, let alone governed, by examining the pieces in isolation. It is this kind of complexity that human intelligence navigates daily, and it is this kind of complexity that predictive infrastructures struggle to recognize.

And yet, within the current industrial imagination, complexity is routinely collapsed and reduced into the complicated so that it can be rendered governable. This is not accidental; it is precisely what gives rise to the authority of Intelligence—not as a neutral tool, but as a regime of truth in the Foucauldian sense, a way of organizing knowledge that makes certain practices appear inevitable and universally desirable while obscuring the assumptions and costs they impose. So, the urgent task, I believe, is to expose the logic of truth-making that underpins this industrial imagination of Intelligence (Foucault 1978)—an imagination that rarely concerns itself with, and often intentionally displaces, what other forms of intelligence it silences, or who benefits from its dominance, and at what cost.

Seen that way, GenAI is far from being a neutral tool like any other that came before it. On the contrary, it is a cultural artefact embedded in—and productive of—a particular set of social structures, power relations, knowledge, and market logics. As Marx and Engels reminded us in The German Ideology (1970, 64), “the ideas of the ruling class are in every epoch the ruling ideas (…).” In this light, GenAI as an artefact—and the broader logic of Intelligence it underwrites—operates as a techno-governance instrument (Bruun 2024) of the current techno-oligarchic class (Morozov 2025), cast not merely as a promising innovation but as an economic and even moral obligation for competitive survival. And, like any dominant paradigm, it functions as a perceptual threshold (Ahmann 2018): its logic becomes naturalized, its assumptions rendered invisible, while alternative forms of intelligence are obscured, delegitimized, or simply erased as valid forms of dwelling in and navigating life.

This naturalization is precisely what makes Intelligence so powerful—and so difficult to contest. To unsettle it, we must first trace how it travels, unfolds, and consolidates within technological, managerial, and everyday terrains.

Crossing the Threshold: The Sales Intelligence

This perceptual threshold that naturalizes Intelligence came into sharp focus for me during the strategic design research project, which I touched on in the introduction. What was at stake was far more than a routine CRM update. Our client, a multinational energy company, was undergoing a significant shift in market and business strategy—from selling discrete energy products through separate product-based verticals to offering integrated energy solutions across the board, to both its B2B and B2C clients. This transition required not just a reorganization of its internal structures from a vertical to a transversal sales model but also a redesign and alignment of customer, partner, and employee experiences accordingly.

As the external consultancy team—a service designer, two researchers, and a UX designer—our project scope was the B2B side of the business: we were to conduct field research with the company’s sales agents, partners, and B2B clients with a two-fold objective: (1) to understand the current experiences, needs, and workflows of all three groups to produce “as-is” and “to-be” service journeys, and (2) to redesign the B2B client platform accordingly. Our primary stakeholders were the B2B business managers. While the company did have an internal research and service design team that oriented us, their remit was strictly B2C. Nobody, it seemed, had ever set foot in the B2B world—at least, that’s what we thought at the time.

It was only in the kickoff meeting that we learned other work was already underway on one of the centerpieces of this strategic market and business shift: the company’s CRM software, the everyday tool of sales agents and partners. The business team cast it as the linchpin of a new, horizontal B2B sales model—a vision that, on paper, made perfect sense. A separate team of designers and developers—also external consultants—had been brought in to refine a pilot interface of the CRM, equipped with AI-driven capabilities that aligned with the new transversal sales strategy, their work unfolding in a parallel design sprint alongside our field research. The sprint’s goal was to refine it, add further Intelligent features, and roll it out across all sales verticals in B2B, so that every B2B sales agent—not just the select ones in the pilot—would use it. The pitch was simple: use customer data and algorithmic insights to spot cross- and upselling opportunities in line with the new business strategy, streamline sales, and make the whole process “smarter” and “faster.”

Any qualitative researcher would raise an eyebrow: how do you redesign a CRM without first understanding the needs and pain points of the people meant to use it? But that wasn’t the business team’s concern. Their starting point for this parallel track of work was their own frustration: The select B2B sales agents who had access to the pilot version were not making use of this new Intelligent version of the CRM as much as the business team thought they would, or should. What they wanted from our research wasn’t a diagnosis of why, but a prescription for how to get sales agents to use it more, especially now that it was about to be sprinkled with further “Sales Intelligence” features.

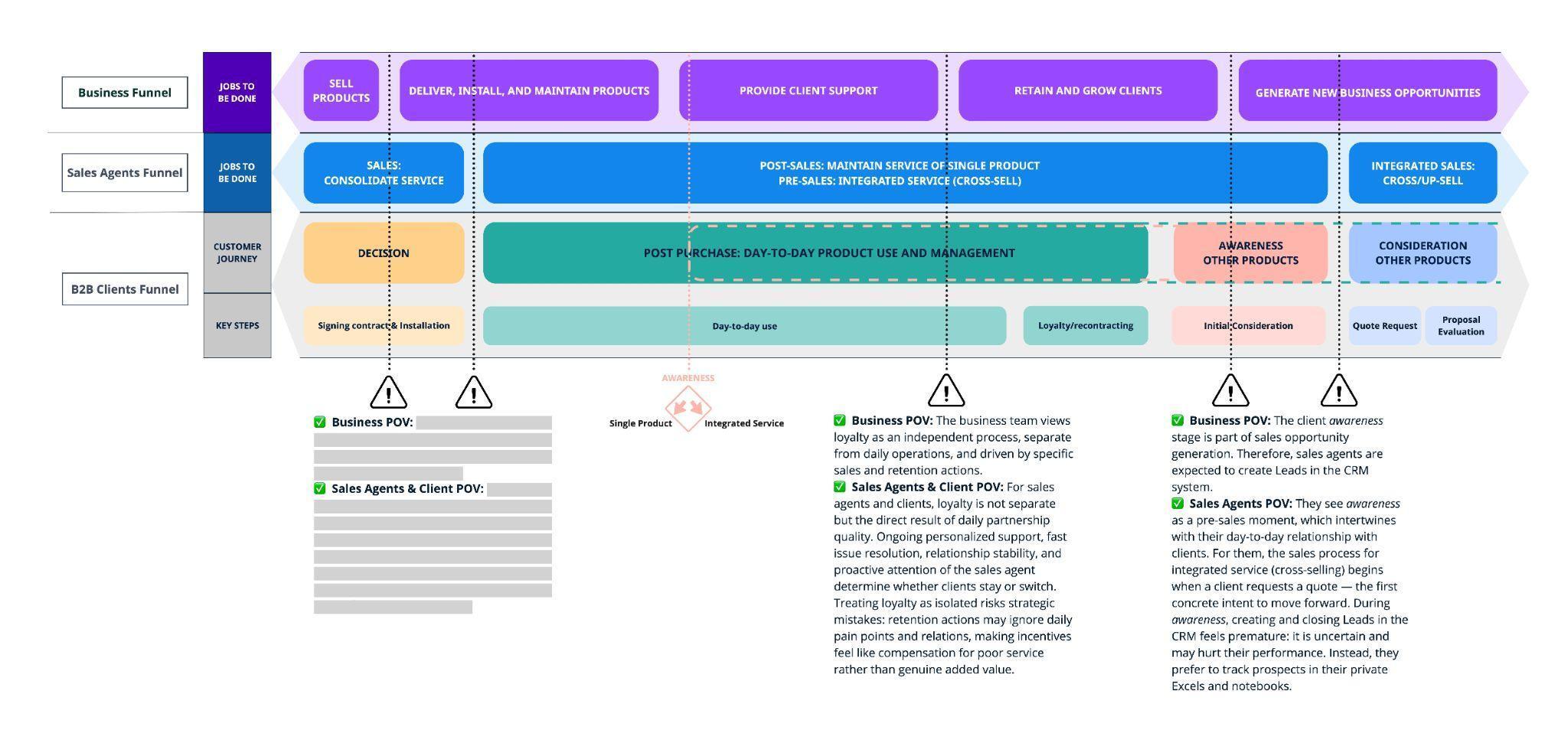

The CRM design sprint was anchored in a business Jobs-To-Be-Done funnel meant to drive business development and align sales and marketing with the company’s new strategy in a top-down fashion.[3] The funnel itself had been sketched out in a co-design workshop with business heads from different verticals without any input from the rank-and-file sales agents, where “Sales Intelligence” was treated as a foregone conclusion. The rest of the story, as the reader might guess, is about how that tidy vision met the messy realities of selling.

Working within these unusual constraints, we interviewed sales agents from different verticals, some with access to the pilot CRM interface and others without, and structured our conversations with them in two parts: first, exploring their use of the CRM—part unstructured usability test, part open conversation—and second, their day-to-day experience as sales agents. In principle, they all spoke well of the CRM, echoing the company’s talking points about its ability to save time, track sales, and surface promising leads. This didn’t come to us as a surprise. They’d been recruited via an internal email from a senior executive “asking” them to make themselves available to talk to researchers about the new company strategy. It was hard to imagine they’d openly criticize a company tool envisioned as the brain of the new business strategy to outsiders like us, hired by their bosses. Indeed, many framed their frustrations as personal shortcomings—“Maybe I don’t know how to use it” or “I probably just haven’t had the right training”—rather than as problems with the tool itself.

Yet, as our conversations shifted from their tools to how they secured and maintained clients, a different picture came into view: Their work ran on slow, relational rhythms and care work: inviting clients for coffee, remembering the names of their children or pets, stepping in to solve problems well beyond their work’s remit (“Oh, you need a mechanical engineer? I know someone”), or simply waiting for the right moment (“You mentioned a few months ago your electricity contract was up for renewal—shall we check your consumption now and see if we can get you a better deal?”). Securing deals often took months, sometimes years, moving through an unpredictable choreography of multiple visits, favors, attempts, and follow-ups. The details that mattered most—being attentive to fleeting remarks, remembering big or small shifts in a client’s personal or professional circumstances, working with tacit understandings of personalities and needs built over time—rarely made it into the CRM, nor were they accounted for in its “Intelligent” features. Instead, they lived in private notebooks, simple Excel sheets, and, most of all, in the sales agents’ own memory and relationships—primitive forms of intelligence, perhaps, recalling Sahlins, but exquisitely attuned to their context.

In contrast, the CRM worked off what sales agents considered “hard data”: a client’s size, sector, current products, and years as a customer. Working with this data, it would automatically generate leads (i.e., clients in sales agents’ accounts to whom they should attempt to cross-sell) or prompt them to hit-this-button-to-send-a-given-pre-scripted-marketing-email-to-client-A-about-product-X because the algorithm had concluded that client A would likely be interested in product X. But sales agents, regardless of their vertical, agreed this kind of data rarely dictated the right next step. Conversations we later had with B2B clients only reinforced their point: cold marketing emails, especially when triggered by the CRM, often backfired and made them feel like “just another customer,” if they were opened at all. Clients, indeed, highly valued the personalized attention of their sales agents. Having a sales agent with whom they had a personal relationship and to whom they could turn whenever they had a problem, question, or request was what most B2B customers were willing to pay extra for.

The CRM’s predictive logic also operated on a different temporality of meaning. The cross-selling leads and opportunities it identified were defined in the present tense: if, for instance, a sales agent attempted to cross-sell to a lead that the system had identified and “failed,” the lead had to be marked as “Closed”—i.e., not won—in the system. These outcomes fed directly into sales agents’ performance evaluations via KPIs such as lead conversion rates. However, an attempt that didn’t end in lead conversion—today’s “failure” in the system’s terms—was often a deliberate pause to build trust and a prerequisite for tomorrow’s success in the sales agents’ terms. The reverse was true as well. “Wasting time” on a client that the CRM had already written off became a performance risk.

In other words, what the system registered as failure or inactivity, the sales agent perceived as latent potential. One operated in a world of leads and probabilities; the other in a world of obligations and rhythms. These differences were critical because they marked the edges of Intelligence’s epistemic reach, showing that sales wasn’t just an algorithmic achievement but a relational one, sustained through time, attention, and often, uncertainty. In the absence of the recognition of this relational form of intelligence, the CRM became not a support, but a surveillance device. Unsurprisingly, sales agents began withholding certain interactions (“unsuccessful” cross-selling attempts, registering visits, sharing their notes) from the CRM to avoid being penalized for strategies and actions that didn’t align with or couldn’t be justified against its algorithmic logic or metrics.

This echoes what Bruun (2024) describes in her study of algorithmic governance: the shift from technology as augmentation to technology as enforcement. Once embedded in workflows, AI systems often foreclose other forms of knowledge and interpretation not by force, but by subtly delegitimizing them—an act of translation that flattens the distinction between the complex (irreducible, relational, emergent) and the complicated (reducible, solvable with enough rules). In the name of “Intelligence,” the CRM had transformed from a tool to an arbiter.

A quieter but equally important concern underpinned this selective data entry: if their relational knowledge were recorded in the CRM, their role could become redundant, the sales agents feared. Once personal networks, relationships, conversations, and accumulated trust were codified, their job would, in theory, be handed to another sales agent—or even to the machine itself. For some, the CRM was not only a performance monitor but a potential replacement, and a technology that pitted them against their fellow sales agents, whom they used to rely on for internal support. They were, indeed, not wrong, though it wasn’t the CRM itself that posed that immediate threat despite being the organizing logic behind it. The long-term objective behind the unified client platform our team had been tasked with designing was to digitalize client interactions and steer SMEs (Small and Medium-sized Enterprises) toward self-managing their accounts—removing the need for a dedicated sales agent. This platform would, of course, be fully integrated with the CRM to predict the client’s needs and likely next moves and feature a smart chatbot able to respond to client inquiries at any hour, offering a level of availability no human could match and quietly shifting the sales agent’s role from an indispensable intermediary to a relic of a bygone era.

Let’s return to the remark I quoted at the beginning—the one made by a business manager with a sales background during the design sprint review: “It looks good and impressive, except it is all in a straight line, but our clients never move in straight lines, nor do our sales agents.” It landed with force because it crossed the perceptual threshold of Intelligence, denaturalizing its logic and assumptions. For a moment, it was clear this wasn’t simply a matter of user mental models failing to align with system logic. It pointed instead to a fundamental disjuncture between two forms of intelligence: one optimized around algorithms trained on isolated, generalizable data that privileged prediction, control, and efficiency in the present tense; the other grounded in relational attunement to context, uncertainty, ambiguity, and possibilities unfolding over time. This disjuncture matters because it reveals how AI systems operationalize intelligence in a way that is categorical and closed, while human actors often operate in contexts that are relational and open-ended.

Powerful as the comment was, its effect was short-lived. The head of the sprint quickly replied, “For that reason, the sales agents have to use and engage with the CRM more for it to work powerfully”—as if to close the very threshold that had just opened. Here you can see, in miniature, the micro-mechanics of universalizing truth-making that underwrite the current industrial vision of Intelligence: a dominant truth (in this case, the CRM’s Sales Intelligence) is asserted, and organizational reality is re-ordered around it—how to determine leads, how to measure performance, etc.—while the responsibility for its potential failure is externalized. The blame is displaced onto sales agents for being “disengaged” or “inefficient,” thereby recasting them as the problem to be solved and denying them any reason or legitimacy (or intelligence), and in doing so, reinforcing the very truth that was presupposed.

If we extend this logic beyond the CRM, we can see it in today’s obsession with “prompt engineering.” When a large language model produces a flawed output, the responsibility is predominantly framed as a problem of user input—one simply needs to learn how to give better prompts—rather than as a limitation of the model or the conditions of its training. The implicit message is that the system cannot be wrong; it is we who must retrain ourselves to match its potential. Just like in the case of the CRM, the dominant logic asserts itself as truth, while any signs of deviation or friction are treated as external errors.

Returning to the CRM, if the crossing of the perceptual threshold (instances when the assumptions of Intelligence are briefly denaturalized and made visible) were not momentary but sustained, the sales agents’ reluctance to use the CRM actively could be read as an everyday form of resistance (Scott 1985) to the flattening of sales intelligence (with a lowercase “i”) into algorithms, and as a defense of the mesh of relationships, obligations, contingencies, and interpretations that no algorithm could fully predict or reproduce.

In saying this, I don’t mean to romanticize human intuition or reject technological assistance altogether, but to make visible the deeper tension that runs through many intelligence infrastructures—not just our client’s CRM. It is the epistemic tension between a world rendered legible through patterns, algorithms, and the automation and efficiency they prescribe and a world that must be inhabited, felt, and navigated in real time. What the system flags as failure, the sales agent experiences as due diligence. What the algorithm calls a dead end, the human sees as an open possibility. The question that follows, then, is how we might understand this disjuncture between two seemingly incompatible forms of intelligence—and what theoretical and conceptual tools might allow us to do so—beyond the bounds of this single case.

Worlds of Intelligence: Connecting the Dots or Following the Lines

Whenever I want to talk about the different ways in which things in the broadest sense—humans, machines, technologies, animals, nature, institutions, and what have you—hang together and play a role in shaping social situations, I often put up two images.

The first is probably well-known both among academics and practitioners in the industry: a network of connected dots (see Figure 1). It’s the sort of diagram we sketch in workshops in early stages of research for product or service development, often with Post-its: suppliers here, distributors there, customers somewhere in between, with arrows showing who talks to whom, what is dependent on what, or where clusters of activity or tight interdependences form. With this bird’s-eye view comes a certain satisfying sense of completeness—as if seeing it all at once also means we can grasp whatever problem brought us there, manage it, maybe even solve it. Theoretically, of course, the diagram could be expanded endlessly to include everything (as the common saying goes, a butterfly flapping its wings can cause a hurricane across the ocean!), but in practice, it works by giving us a bounded and containable picture of a social world that is anything but.

The second is a bit messier: not dots, but lines (see Figure 2). They loop, knot, fray, rejoin, split, and sometimes vanish, maybe to surface elsewhere. They move in more than two dimensions, threading through time as much as through space. The moment we try to freeze it, we miss the point. For that reason, unlike the first image, we can’t speak of this image as a diagram in the usual sense—it escapes the idea of a complete view. Instead, it offers the sensation of a world and things in motion, a weave of paths and trajectories where beginnings and endings are hard to pin down. The suggestion here is that the social world isn’t built from fixed but connected actors, but from lives and relationships unfolding, snagging, and tangling over time, in ways no bird’s-eye view could ever fully capture.

I offer these two images as shorthand—metaphors, or perhaps provocations—to make sense of the disjuncture between the seemingly incompatible forms of intelligence. The first image, a network map of discrete actors and fixed ties, stands in for Actor–Network Theory (ANT), which treats entities and the links between them as the building blocks of social reality. The second, a weave of lines in flow and motion, evokes what Tim Ingold calls the meshwork, where social life is understood as movement along entangled paths rather than fixed connections between dots. Both inhabit the ways organizations attempt to deploy and design for intelligence—often without realizing that they do, which one they are enacting, or why.

From Epistemologies to Ontologies

Up until this point, I’ve been talking about forms of intelligence as if they were epistemic regimes: different, and often competing, ways of knowing and enacting knowledge, such as Intelligence as prediction and control and intelligence as situated attunement.

In broad strokes, an epistemic regime of intelligence is the knowledge–power infrastructure that:

- Defines “intelligence” in ways that fit certain values: whether those values are efficiency and prediction, responsiveness and care, or something else entirely.
- Codifies methods and metrics: elevating certain tools (statistical modelling, machine learning, ethnographic observation, community mapping, etc.) as legitimate.
- Institutionalizes expertise: recognizing some actors or systems as authoritative “knowers” (AI models, data scientists, sales agents, community elders, etc.).
- Bounds purpose and direction: orienting intelligence toward specific ends, whether that’s optimization, competitive advantage, accumulation of capital, social equity, ethical presence, or ecological balance.

In doing so, regimes often delimit the field of possibility: legitimizing some ways of knowing and enacting that knowledge may render others marginal, anecdotal, or even unintelligible. This is not simply an epistemic effect but, as I argued earlier about truth-making, a political and ontological one—shaping not only ways of knowing, but what can be known, what is worth knowing, by whom, and who has the right to define, act on, or transform the social reality that ensues in the first place.

So, if epistemic regimes tell us how to know, ontologies tell us what there is to be known. And if we don’t examine the latter, we risk treating disjunctures between epistemic regimes as mere misunderstandings—or, as I put it in the CRM case, a matter of “user mental models” failing to align with “system logic”—when in fact they are rooted in different visions of social reality itself.

That is why I find ANT and meshwork useful to think with, not as definite answers but as theoretical tools or provocative frameworks. They allow us to expand to the ontological, to include the assumptions about what the world is that underpin these epistemic regimes. This expansion matters precisely because epistemic regimes don’t float free; they grow out of, and in turn reinforce, particular ontologies: visions of how we structure social reality and how its parts relate. And it allows us to see why predictive, scalable Intelligence gains traction so easily in industry: it aligns with an ontology that is already legible to managers, investors, and algorithms alike. By the same token, more situated, relational intelligences tend to be cast as slow, inefficient, or even obstructive—not because they fail in practice, but because they trouble the neat, governable world that Intelligence seeks to secure.

Actor-Network Theory: What the Dots Can (and Can’t) Show Us

What also struck me in the story of the CRM wasn’t just the undeniable gap between human and machine judgment, but the sales diagram that was used to structure the design sprint. It depicted the entire sales process under the new company strategy as a neat ecosystem: discrete actors (clients, leads, sales agents, ABM, channels, Marketing clouds, CRM nodes, product verticals) connected by arrows, inputs feeding into NBAs (Next Best Actions), single causes leading to single effects. It was a classic systems diagram, a holdover from operations research, information design, and what Bruno Latour (2005) and others helped popularize as Actor-Network Theory (ANT).

Developed in the 1980s within Science and Technology Studies (STS), ANT challenged earlier socio-anthropological theories by insisting that the human and the technical can be studied in the same analytical frame (Callon 1986, 2001; Latour 1987, 2005; Law 1987). Its proposition was simple but radical at the time: that the social is not just made up of people. Technologies, institutions, organisms, documents, roads… these are not just mediators of social life but actors too with agency, and their agency matters as much as that of any human in shaping what happens.

Before ANT, much of sociology (perhaps with the exception of the Marxist thread) treated the material world as a static backdrop. ANT insisted that the material is in the action. A door hinge can invite or prevent entry; a laboratory instrument can decide what counts as valid data; a speed bump can enforce a law as effectively as a police officer.

This is the background to what is now unwritten and second nature in corporate and service design, for instance. The systems-thinking or ecosystem maps that place humans and non-humans on a shared plane of actors and trace their connections could be considered industry applications of ANT. The idea that a printer jam or a billing API could hurt a service experience or a person is pure ANT logic.

But the neat diagrammatic imaginary that ANT has offered hides as much as it reveals:

-

Fixed in time and space: An ANT diagram or ecosystem map captures a moment. By establishing networks of relationships, it also freezes them as if they were stable, even when they are provisional, contested, and always in motion. This can make a network feel inevitable, giving the impression that this is how things naturally are, rather than contingent.

-

Hides asymmetries: ANT, and systems design at large, with another strand of roots in mid-20th century industrial process modeling and cybernetics (see Meadows 2008; Forrester 1961), treats all actors symmetrically, which can make unequal relationships look even, obscure power, and, ultimately, embed these asymmetries into the very technologies they give rise to. On the sales diagram, for instance, the sales agents, the clients, the marketing cloud, and the CRM all appeared the same as actors in a network without any indication as to who sets the terms of engagement, who is accountable to whom, or whose priorities the network is ultimately designed to serve, and under what conditions (formal, informal, of cooperation or coercion to name a few).

-

Diffuses responsibility: When agency is everywhere, accountability can be nowhere. In ANT diagrams, “the network,” as a totality that produces the outcome, has the effect of transforming responsibility from something concrete, assignable, and open to negotiation into something abstract, omnipresent, and impersonal. Responsibility doesn’t vanish, but coupled with the hidden asymmetries, it becomes diffused and easier to externalize, foreclosing a transparent process of responsibilization (Geschiere 2023). This is not just a practical issue but an ethical one. Recall how users are blamed for not “prompting” well enough when an LLM produces a flawed output, just as sales agents were accused of being disengaged or inefficient when the CRM failed to deliver.

-

Failure as failure: In ANT terms, a broken connection or a system flaw is considered something to be repaired or replaced. Failure is treated as a disruption to be corrected, not as a possible signal that the system itself might be harmful, unnecessary, or in need of reimagining. In industry contexts, this logic, perpetuated by the maxim of “fail fast, fix fast,” can mean patching and optimizing systems that might be misaligned with their stated goals, rather than opening space to rethink the system’s purpose or design from the ground up.

Taken together, these features make ANT a framework through which to make sense of Intelligence (with capital “I”), oriented toward prediction and control. It rests on an ontology of the social world as made up of discrete entities linked through distinct traceable relations. If these entities and their connections can be mapped, the system can, in principle, be modelled and its behavior anticipated. The very fact that this picture omits ambiguity, temporality, and ethics is part of its appeal and popularity within the industry—especially for those who need systems to be both predictable and governable.

Meshwork: The Lines of Becoming

Tim Ingold’s meshwork begins from a different starting point. The social world, in this view, isn’t a set of discrete entities linked together, but a tangle of lines in motion—paths of growth, movement, attention, correspondence like roots threading through soil (Ingold 2000, 2011, 2017; Klenk 2018; De Munter and Salvucci 2024).

Where ANT gives us a 2D snapshot, meshwork thinking offers something closer to a long exposure in 3D—a record of trajectories, crossings, and knots over time. And where ANT flattens all actors into a single plane, meshwork thinking aims to bring their histories, possible futures, and vulnerabilities into view. It does not treat connections as fixed, but as continually made and remade in motion. Ingold, indeed, warns against the everything-is-connected-to-everything cliché, claiming that it sounds profound but erases the specificity of real relationships. Instead, in meshwork, the focus is not on connections—their presence or absence—or distinct entities but on their shifting relations and correspondence—an orientation to life that does not seek to manage from above, but to relate from within (De Munter and Salvucci 2024): the ways lines run alongside each other, knot, diverge, fray, emphasizing movement, interaction, improvisation, attentiveness, and adaptation. Responsive ethics, therefore, is built into this framework: the so-called inefficiencies—a pause before sending a proposal, a detour into a side conversation, the decision to wait until a client is ready, or a gut feeling—are not noise in the system; they are its fundamental aspects (Govia 2020).

Let’s return to my earlier critiques of ANT and reconsider how its limitations may transform into affordances through a meshwork lens:

-

Fixed in time and space → Always in motion: Instead of freezing relationships into a snapshot, meshwork thinks of them as trajectories, crossings, and knots that may shift, disappear, reappear, or reform over time, keeping us alert to emerging possibilities, shifts in influence, and changes in meaning that a snapshot might miss or erase.

-

Hides asymmetry → Embeds asymmetry: In ANT, the act of turning everything into “actors” is already a levelling move; in meshwork, the starting premise is that correspondences are always shaped by power asymmetries, even if subtle, and those asymmetries are part of what sets them in motion, not an afterthought.

-

Diffuses responsibility → Embeds responsibility: To move with, adjust to, and respond to others is already taking responsibility—for sustaining the relationship, for noticing when something is off, and for acting to keep the correspondence alive. That responsibility is lived in motion, and because motion leaves a trace in actions, reactions, and adjustments, it can be followed by those involved and by others. This keeps both responsibility and accountability open to contestation, rather than dissolving them into the abstraction of “the network” or externalizing them.

-

Failure as failure → Failure as diagnosis: Failure in meshwork also shifts meaning. It might be a bend, a pause, a change in the rhythm—a sign that the correspondence itself is changing. It is a moment of diagnosis, making visible aspects of the relationship or systemic assumptions that condition the former. In this sense, failures can function as crossing points of perceptual thresholds—a moment when underlying tensions, unspoken priorities, or multiple visions come into view.

These contrasts tell us that meshwork is not about replacing or rejecting ANT; it is a shift in vantage point—a different vision of how social reality is structured and how its parts relate. Both theories insist that the technical and the social emerge together, but where ANT often seeks to map and stabilize, meshwork attends to the world’s ongoingness and complexity, and its continuous remaking in practice.

In our applied work, these two ontologies often interleave. Most ethnographers (me included) move between them—using ANT’s mapping to get a sense of the general fabric of a system, then shifting into meshwork’s register to follow how it is inhabited, bent, or sustained in the ever-unfolding lived experience of those who are part of it. Let me offer a couple of quick examples to show how these vantage points diverge in practice, and how switching between them can surface dimensions of meaning and insights that would remain invisible if we stayed in just one.

Working on a hospital’s service delivery, an ANT lens might begin by mapping the system: clinicians, lab workers, lab results, imaging systems, operating theatres, billing and insurance, and the electronic health record (EHR) system as the central node linking them all. The aim here would be to trace the connections and dependencies so they can be optimized for smoother, faster, more reliable patient care—and everything else would be expected to conform to that “to be” service flow.

Shifting into the meshwork’s register, other aspects come into view. A nurse’s slow work of building rapport with a patient’s family. The way the patient information is registered in the EHR shapes therapeutic relationships and even patients’ sense of self, and leads to side conversations between the patient and the caregiver to make patients feel like they’re more than just their condition (see Craig 2012). A clinician’s decision to delay updating a lab result in the system to allow time for clarification on an anomaly. These moments of attunement and adaptation wouldn’t be “outside” the service delivery or “bottlenecks” to it; they run alongside it, sometimes in tension, and sometimes reinforcing it, as integral aspects of patient care. Switching back and forth between these registers—the stabilizing map and the lived lines—makes visible how service delivery in a hospital can’t be reduced to an “efficient” flow or the data captured in EHR, even if the system remains indispensable for coordinating work.

Or think of traffic management. An ANT view might chart the system through its key nodes and connections: traffic lights, road sensors, control rooms, pedestrian crossings, driver behaviour, and centralized algorithms that optimize signal timing to maintain a steady flow of urban mobility. The aim is to keep the system legible and controllable, adjusting its parts to conform to a model of efficiency and safety.

From a meshwork perspective, the picture shifts. A moving truck might block a street. A cyclist might slow down the traffic. Temporary roadworks may lead pedestrians to carve new, informal routes along sidewalks or through parks. Over time, these informal routes—desire paths—etch themselves into their everyday routine, reflecting informal patterns of care, urgency, or avoidance that are responsive to their environment, social obligations, and risk perception. These paths are not noise in the system; they are part of how mobility is lived and negotiated. Moving between ANT and meshwork would make visible how mobility systems are not only governed by centrally managed flows, but also continually remade by the shifting dynamics of everyday life.

From Intelligence to intelligence(s)

If we circle back to the CRM case and examine it through these theoretical frameworks, the disjuncture between the business and sales teams over sales intelligence wasn’t just a disagreement over methods, over how to know and act (bounded transactions versus contingent, ethically charged navigations), but what there was to be known in the first place, rooting these two forms of intelligence in an ontological difference.

From the business side, sales intelligence, which was crystallized in the CRM’s Intelligence, was envisioned and enacted as linear, transactional, and bounded. It meant a smarter ecosystem. Faster results. If some part of the system failed (say, a sales agent stagnated or a lead didn’t convert), it was read as a faulty node, a missed opportunity, or a fixable bottleneck. The system didn’t ask why the sales agent waited, or why the client didn’t follow through. It simply flagged the events as data to be converted into performance metrics to feed future predictions.

That is not how intelligence worked in the everyday experience of the sales agents. From their perspective, intelligence was non-linear, relational, and deeply embedded in everyday circumstances and situated interpretations. It was not just a static asset to be captured in the CRM, but a living correspondence distributed across time—woven from ongoing exchanges with clients, colleagues, technologies, and market cues, using a mix of memory, gossip, gut, and cues. It was shaped by histories of trust, tacit understandings, moments of hesitation, and deliberate detours and delays to build and preserve relationships. So, what looked from the CRM’s vantage like inefficiency or disengagement—reluctance in updating records, side favors and conversations, coffee invitations, and time-consuming back-and-forths—were, in meshwork terms, the very movements that the system can never log into a vision of Intelligence but that, nonetheless, sustained the lines of business.

Let me point out two things here: First, the sales agents’ vision of intelligence was not simply in the sales agents; it emerged in the back-and-forth, the situated interpretations, improvisations, and adaptations that tied them into their clients’ worlds, and could not be stabilized without losing the qualities that made it effective. This matters because what was at stake was not just efficiency-as-output, but the very conditions under which the efficiency could really be achieved and sustained over time.

Second, the sales agents were not opposed to the CRM’s Intelligence; in fact, they valued it as a high-level pulse check within the volatile energy market, one that allowed them to compare clients in their portfolios and communicate with different stakeholders across the company. What they resisted was its elevation into the sole lens for diagnosis or decision-making—a move that would flatten their world of correspondences into a single, bounded register. Their continued reliance on what looked like primitive methods of relationship management and record keeping—notebooks and Excel sheets—was, whether consciously or not, an ethical stance. It was a way to protect themselves, their clients, and the business that kept each of them alive, and to preserve the plurality of intelligence(s).

Lessons for Practitioners: Ethnographic Refusal as Perennial Frontier

In the previous sections, I traced a disjuncture where performative intelligence—Intelligence—failed to account for living intelligence. I argued that it wasn’t just a matter of collapsing context into categories or simplifying complex relations into data points, but a deeper difference in how each imagined social reality—what there is to be known, what is worth knowing, and to what ends. I suggested ANT and meshwork as theoretical frameworks and conceptual tools for surfacing these deeper differences and the conditions under which certain forms of intelligence are elevated into universal and totalizing truths, while others are rendered redundant, if not invisible.

This section then asks what we, as designers, researchers, and practitioners, might do with such differences and conditions beyond just witnessing, documenting, or trying to reconcile them in small ways. Can we instead lean into them to reorient what we mean by intelligence altogether?

Troubled Meshworks and Ethnographic Refusal as Perennial Frontier

Working with Ingold’s theoretical propositions, De Munter and Salvucci (2024) introduce the idea of “troubled meshworks:” configurations of life that are not harmonious and corresponding but full of friction, discrepancies, ambiguities, and conflict. I believe it is an accurate concept to work with to make sense of the current state of our world. We live in times of troubled meshworks, both in general, within the framework of contemporary capitalism and the global ecological and humanitarian crises, and with respect to intelligence in particular.

We’re in the midst of a paradigm shift that is troubled: As Stanger et al. (2024) show, Intelligence (with capital “I”) has already established itself as the highest form of knowing and acting and is shaping global futures not through brute force, but through quiet and insidious standardization (echoed daily in headlines announcing that ‘big tech is going all in on AI’). It normalizes certain ways of seeing, valuing, and acting. However, it is still not fully universal and totalizing. There are still enough voices, with or without the authority to speak, and enough raised eyebrows that question its practical and soteriological premises and messianic self-promotions; enough private and public discussions still unsettle its omnipotence (hence, the EPIC’25 conference); and, in my words, enough perceptual thresholds are still open and can be crossed or widened.

These are the thresholds where ethnography becomes indispensable. I don’t mean ethnography in the narrow sense of a method—the interview guide, the observation protocol, the fieldnotes—but in the broader sense of an analytical approach: a way of apprehending and engaging with social worlds that involves constant reflection. In this register, ethnography is not simply a toolkit for gathering data but becomes a stance, one that is, by definition, liminal. The ethnographer is never entirely inside or outside the world under study, looking at it and looking from within it at once. This liminal position, the suspension at the threshold, is what makes possible the work of interpretation of meanings and their translation across different worlds. That stance is what makes us insist that context is never a backdrop to living and dwelling in life or an input in the pipeline, but is constitutive of it (Seaver 2015) and refuse to smooth over complexities or collapse them into a single explanatory logic.

That is why the ethnographic approach matters even more than before in times of troubled meshworks. Here, refusal is not another word for resistance. As Simpson (2014) reminds us, resistance presumes the authority of what it opposes, while refusal declines that presumption. In that sense, refusal is an ethnographic stance, but also a political and ethical one that rejects hierarchical positioning between different worldviews, allowing us to imagine alternative configurations of their relationship altogether. Said differently, practicing ethnography in times of troubled meshworks is a practice of refusal: refusing to translate life entirely into dominant categories or algorithms, refusing to let one form of intelligence overwrite all others, and refusing premature closures of alternative futures.

This embedded refusal in our practice is what makes us dwell in the perennial frontiers of conversations about what intelligence is and what it should serve. I borrow the term from anthropologist Paul Little (2001), who coined it to describe Amazonia not as a single frontier that opens and closes, but as a mosaic of recurring, contested zones shaped by overlapping histories and cycles of extraction and reinvention. I find the metaphor a powerful way to situate ourselves in the ongoing, unsettled discussions and negotiations over intelligence(s). Just like in Amazonia, the perennial frontiers of the industry are the enduring zones of encounter where divergent logics and priorities meet. Unlike perceptual thresholds, which mark ephemeral moments of recognition or rupture, perennial frontiers are not episodic or fleeting. They are structural and ongoing—multiple and recurring spaces we occupy at the margins across consulting, product, strategy, or design, or what have you, where competing regimes of intelligence—algorithmic, relational, financial, experiential, situated, embedded—confront and challenge each other in different ways, each demanding fresh negotiations and struggles over the legitimacy of our approach. Our presence also follows cycles of emergence and eclipse: recruited in moments of boom, laid off during crises, sidelined in efficiency drives, then reintroduced, sometimes under new labels, when user needs or human complexity inevitably reassert themselves. And, as in Little’s depiction of Amazonia, these frontiers are not blank margins but quilts of overlapping histories—stitched from anthropology, STS, systems and design thinking, corporate R&D, human-centered approaches, etc.

If a pessimistic view situates perennial frontiers at the edge of technological innovation, an optimistic one recognizes them as innovation’s blind spots—not margins, but apertures. The optimistic reading resonates with Munk, Knudsen, and Jacomy (2022), who argue for a shift from explanation to explication—a mode of ethnographic engagement that resists generalization and instead attends to the dense specificity of meaning as it unfolds. To this end, they argue that we need thick machines: machines that do not strive to process more data, but allow space for interpretation—drawing from Geertz’s (1973) notion of thick description, where the difference between a blink and a wink lies not in the movement of the eye (a twitch) but in the social context that gives it meaning. Such thick machines would surface contextual specificities rather than flatten them into generalizable outputs.

This idea was developed further in a recent whitepaper published by the initiative called Doing AI Differently, led by the Alan Turing Institute, University of Edinburgh, and the UK’s Arts & Humanities Research Council. It reads like a manifesto for “a fundamental shift in AI development – one that positions the humanities, arts and qualitative social sciences as integral, rather than supplemental, to technical innovation,” arguing that “interpretive depth [i]s essential to building AI systems capable of engaging meaningfully with cultural complexity – while recognising that no technical solution alone can resolve the challenges these systems face in diverse human contexts” (Hemment, Kommers, et al. 2025).

Making Machines Thick

This call for interpretive depth resonated directly with our own intervention in the strategic design project. In the CRM case, our consultancy team sought to embody precisely this shift: designing with an awareness that relational and situated forms of intelligence are not peripheral to technical systems, but central to their legitimacy and effectiveness. Concretely, some of the things we proposed were:

-

Accounting for relational temporalities. We proposed the introduction of pre-lead and in progress categories in following up cross-selling opportunities to capture the gray space between Won and Closed (not won), allowing sales agents to register and nurture emerging client relationships without being penalized by rigid, binary classifications. With this, we aimed to create room for slower and cyclical temporalities of trust-building, recognizing that not all sales interactions conformed to the CRM’s linear pipeline that read the world in present tense. Alongside this, we also suggested KPIs that were process- rather than output-oriented, valuing, for instance, not only lead conversion but also number of clients contacted and number of contacts to acknowledge the ongoing relational work sales agents perform.

-

Recognizing situated knowledge as a legitimate override. We advanced the idea that sales agents should be empowered to overrule CRM recommendations that made sense in predictive terms but clashed with contextual realities and were socially tone-deaf—for instance, skipping a suggested cross-selling call immediately after an invoice dispute, or declining to send a marketing email about machine oils when the sales agent knew the client’s family owned a competing franchise. Crucially, such discretionary choices were to feed back into the algorithm as a source of interpretive learning, rather than being treated as error or random choice or penalized by performance metrics.

-

Pluralizing next best actions. Instead of collapsing decision-making into a single NBA (Next Best Action), we suggested offering multiple alternatives, each with its own rationale—for example:

-

Option A: Call now (high probability of closing based on past behavior).

-

Option B: Wait until next billing cycle (aligns with client cash flow rhythms that are non-standard).

-

Option C: Send a low-pressure check-in message (maintains relationship warmth despite low immediate conversion chance).

-

This design acknowledged that multiple intelligences—algorithmic, circumstantial, relational—coexist, and that sales agents must exercise judgment in choosing among them.
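To make these three proposals more tangible, here is a minimal sketch of how they might be represented in a data model. It is an illustration under stated assumptions, not the system we designed or the client's CRM: every name in it (`Stage`, `Option`, `Decision`, `Opportunity`, the option labels, and the client "Acme") is hypothetical. It shows intermediate stages between the binary outcomes, a process-oriented contact count, several suggested actions each carrying its own rationale, and an override that is stored with the agent's reason as a learning signal rather than as an error.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    # PRE_LEAD and IN_PROGRESS stand for the proposed gray-space categories.
    PRE_LEAD = "pre-lead"
    IN_PROGRESS = "in progress"
    WON = "won"
    CLOSED_NOT_WON = "closed (not won)"


@dataclass(frozen=True)
class Option:
    label: str
    rationale: str
    source: str  # "algorithmic", "circumstantial", or "relational"


@dataclass
class Decision:
    client: str
    chosen: str
    agent_reason: str = ""
    is_override: bool = False


@dataclass
class Opportunity:
    client: str
    stage: Stage = Stage.PRE_LEAD
    options: list[Option] = field(default_factory=list)
    contacts: int = 0  # process-oriented indicator, independent of conversion
    feedback: list[Decision] = field(default_factory=list)

    def log_contact(self) -> None:
        """Count relational work even when nothing converts."""
        self.contacts += 1

    def choose(self, label: str, agent_reason: str = "") -> Decision:
        """The agent picks one of the offered alternatives."""
        if label not in {o.label for o in self.options}:
            raise ValueError(f"unknown option: {label}")
        decision = Decision(self.client, label, agent_reason)
        self.feedback.append(decision)
        return decision

    def override(self, action: str, agent_reason: str) -> Decision:
        """The agent sets all suggestions aside; the reason is kept, not penalized."""
        if not agent_reason:
            raise ValueError("an override needs a stated reason")
        decision = Decision(self.client, action, agent_reason, is_override=True)
        self.feedback.append(decision)
        return decision


# Hypothetical usage with the three options described above.
opp = Opportunity(
    client="Acme",
    options=[
        Option("call_now", "high probability of closing based on past behavior", "algorithmic"),
        Option("wait_billing_cycle", "aligns with non-standard client cash flow rhythms", "circumstantial"),
        Option("low_pressure_check_in", "maintains relationship warmth", "relational"),
    ],
)
opp.log_contact()
opp.override("skip_call", "invoice dispute still open")
```

The point of the sketch is the shape of the record rather than any particular model: whatever consumes `feedback` receives the agent's stated reason alongside the choice, so the judgment stays legible instead of disappearing into a missed-action count.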

Are Thick Machines Enough?

But is dotting algorithms with interpretive depth enough? I don’t think it is. Nor is it ever fully possible, because the force of interpretive depth rests on partiality, presence, and the situated negotiation of meanings that can never be fully pre-coded. So, what else can we do?

Let’s return to the case: Our consultancy team’s involvement in our client’s B2B world was never about optimizing the CRM for greater accuracy only. It was about reframing the business strategy itself. Our central recommendation was one that reoriented the company’s strategic lens—shifting the business model from a product-centered one to a service-centered one that recognized the intangible, and not always easily quantifiable or measurable, power of the correspondences between sales agents and clients (see Figure 3).

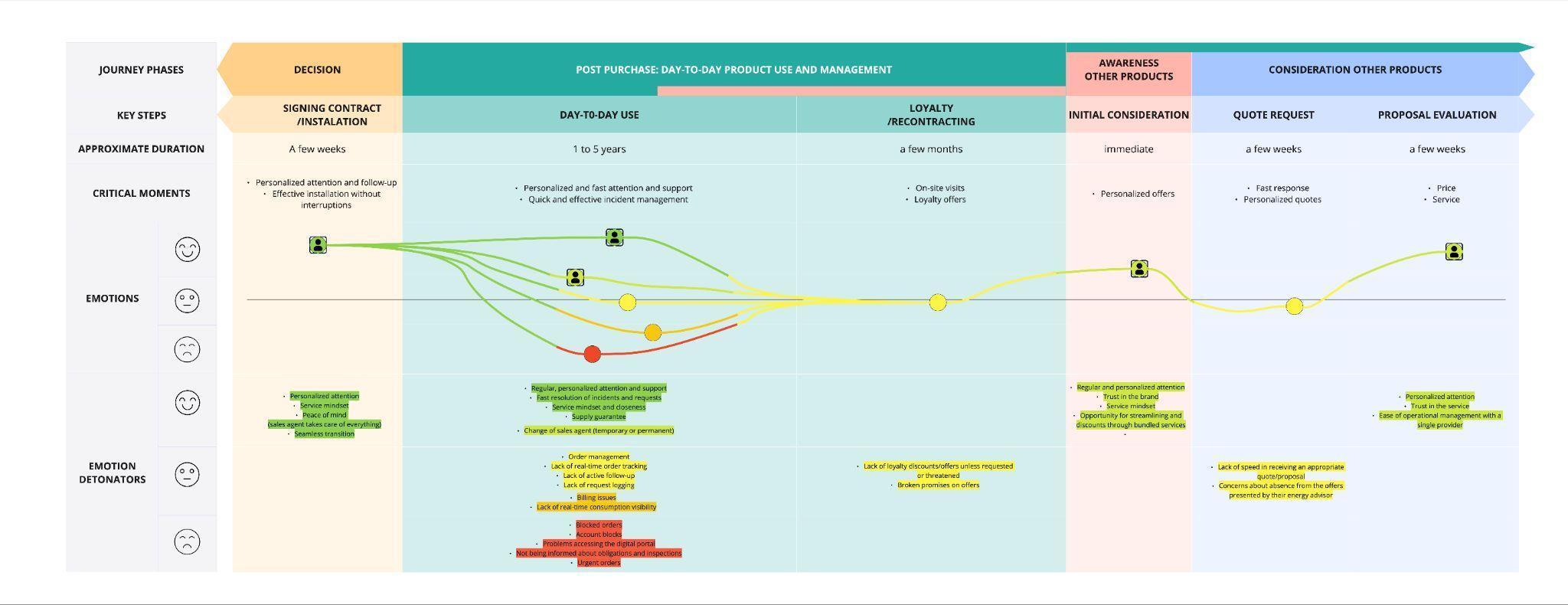

We arrived at this recommendation by tracing the correspondences that wove together the everyday world of sales. What determined client experience, we found, was not only the product quality or price but also the service, which proved just as consequential, if not more, for client satisfaction, loyalty, and willingness to purchase other products. So, the segmentation schema the business team and the CRM relied upon, which sorted clients neatly by size, sector, and product, fit cleanly into algorithmic pipelines but overlooked a third and equally decisive factor: the ongoing relational and intangible labor of the sales agents that made a value difference for customers. Ethnographic inquiry revealed that, regardless of size, sector, or product, all clients fell into one of three relational archetypes:

-

Independent: clients who saw their sales agent as a troubleshooter and sought their presence only when problems or incidents arose.

-

Pragmatic: clients who valued the sales agent’s support for placing orders, making choices, and resolving issues, but did not depend on them constantly.

-

Needy: clients who treated the sales agent as a business partner, turning to them not only for orders and problem-solving but also for interpreting market cues, negotiating prices, and making strategic decisions.

While the degree of correspondence varied, the constant across all three was the expectation of having a human being by their side, via calls, visits, and informal exchanges.

One implication of this finding was in the imagined transition to digital client management. Business managers assumed that SMEs (Small and Medium-sized Enterprises) would be the best candidates to shift to self-managed smart digital platforms, given their high cost-to-serve. Yet in practice, it was the largest accounts that were most capable of becoming digital-first. Their scale meant a division of labor that allowed operational tasks to be delegated, making digitalization more feasible. SMEs, by contrast, often combined strategic and operational responsibilities in the same people, creating workload pressures and less specialization. Precisely because they lacked time and capacity to resolve issues independently, they were the most dependent on the sales agent—even as the cost-to-serve made them appear the most “efficient” candidates for digital automation.

Another implication was in how business growth itself was imagined. The business team envisioned a strictly linear, task-based pipeline, where awareness led smoothly to opportunities, sales, delivery, support, retention, and cross-selling. This model fit the predictive intelligence embedded in the CRM—categorical, sequential, and measurable. But what we found in practice was far messier. Juxtaposing the business Jobs-To-Be-Done with the experience journeys of sales agents and clients, we revealed multiple points of friction, where linear and job-based categories broke down, converged, or became indistinguishable from one another once lived temporalities and correspondences were accounted for. These divergences forced a reconsideration of the CRM’s core functionalities, shifting attention from linear prediction to the more entangled rhythms of service relations (see Figure 4).

We complemented this analysis with a further focus on B2B client journeys. We showed that what the predictive intelligence coded as a single “moment” could in reality span up to five years, with shifting, multiple, and non-mutually exclusive emotional registers, needs, and frustrations across that period. Crucially, what restored client satisfaction at each key juncture was not algorithmic nudges or digital solutions but the timely and personalized intervention of the sales agent (see Figure 5).

Our work and resulting recommendations were, in this sense, an act of ethnographic refusal. We did not start from the presumption that predictive intelligence was the dominant form to which all others must be subordinated and adapted. We did not content ourselves with repairing the system from within its own terms, but questioned those terms altogether. In other words, we did not accept the 2D diagrams (the business JTBD and the CRM’s systems map) of predictive categories we were given as the foundation and then try to make them richer or more “three-dimensional” with interpretive overlays. Instead, we began from the 3D world of correspondences—the relational meshwork in which sales took shape in practice. From there, we translated those correspondences back into an alternative process funnel and journeys, ones that reoriented business strategy and the CRM and client platform design—from 3D to 2D, not the other way around.

Practical Takeaways

What we carried out was less a technical intervention than a negotiation—an attempt to hold on to a way of working that felt ethical, effective, and meaningful, in the face of an infrastructure that no longer recognized it. From this negotiation come several lessons for practitioners about how to work with, around, and sometimes against predictive systems in order to foreground the forms of intelligence that matter in practice.

- Start with the meshwork before building the ANT. Begin from lived correspondences and relational entanglements, and only then formalize networks and categories, rather than assuming the reverse.

- Recognize service as intelligence. Treat the relational work of frontline staff and human relationships not as inefficiency to be automated but as a potentially vital epistemic input into the system design.

- Design for plural temporalities. Rather than treating experiences as a series of forward-moving stages, attend to the temporal rhythms that shape them: slow, recursive, continuous, and affective patterns that are just as important to design for as discrete and quantifiable touchpoints.

- Make refusal shine in the process. Don’t accept the dominance of predictive intelligence as the baseline; reframe the problem so that alternative intelligences can shape the system from the outset.

- Remember that “AI-driven” is not a value proposition in itself. Algorithms generate no value simply by existing or being deployed. Their value depends on their capacity to respond to the human judgments, cultural contexts, and relational practices into which they are woven, as does their predictive capacity.

- Surface multiplicity, not singularity. Build systems that acknowledge competing interpretations, allowing judgment rather than collapsing complexity and ambiguity into one correct solution.

- Evaluate systems in context. Move beyond quantitative KPIs: measure whether systems support ethical, situated, and relational ways of working, which are fundamental, not additional, pillars of the system.

- Keep humans in the loop as sense-makers. Not as passive overseers or mere correctors but as active interpreters who shape how systems are used and what counts as knowledge.

Conclusion: The Fragility of Intelligence (with Capital “I”) and of Futures

Intelligence (with a capital “I”) might be faster, more efficient, and exponentially more capable in processing vast amounts of data or complicated patterns, and it may well be becoming the dominant paradigm in the world. But if there is one conclusion I want to leave the reader with, it is that Intelligence is fragile, because it cannot hold the partiality, presence, uncertainty, and negotiation of meanings on which life actually depends. It can deal with difficulty and compute what is complicated, but it cannot fully handle what is complex, nor fully inhabit complexity.

The CRM case made this visible. It showed what happens when a system built for prediction collides with a practice built on possibility. This was not just a clash of tools, but of temporalities, values, and intelligences. And the value of presenting it doesn’t lie in its singularity; on the contrary, its force lies in its generality. As organizations increasingly come to see AI not merely as a tool but as a model of governance, and as it proliferates across healthcare, education, labor, public services, and into our everyday lives, it not only shapes organizational decisions in industry but also carries with it a worldview: that intelligence can be abstracted, standardized, scaled, and automated. Consequently, it carries, too, a unilinear and universally applicable vision of social life and the future, not only for businesses but for how we live together on this planet and what is left to live for.

Recognizing this fragility, and rendering it visible, matters. So too does cultivating the conceptual, theoretical, and practical tools to refuse its dominance. Because the stakes are not merely operational or economic. They are ethical. They are political. They are environmental. When predictive infrastructures define what counts as intelligent action, they do not just define how decisions are made; they also decide what, and who, is worth attending to, downplaying consequences as mere externalities or prices to pay (recall the reports of exploited clickworkers labeling data for pennies or the mounting evidence of AI’s staggering carbon footprint).

I want to end where I began, with the provocation that Marshall Sahlins once posed about progress, and ask it again of intelligence. What if we measured intelligence not by the accumulation of data, the accuracy of prediction, or the speed of decision-making, but by the absence of hunger, starvation, and genocides? By the distribution of leisure time? By the depth of understanding, or the quality of relationships between people, or between humans and the environment? Would we still appear more intelligent than ever before?

This is where ethnographic refusal takes its place as a perennial frontier: not chasing better predictions, but holding open the possibility that intelligence could be otherwise, and so could our futures. It is by practicing this refusal seriously that we can recognize not only the difference between Intelligence and intelligences, but the difference that difference makes.

About the Author

Sena Aydin Bergfalk is a product researcher and service designer based in Barcelona. Trained as a cultural anthropologist, she connects the dots and traces the lines between user needs, stakeholder demands, business goals, and technical possibilities to build the right product or service and build it right. She is currently working as a Lead Service Designer at Sngular.

Research Ethics

The case study presented in this paper was undertaken to better understand how organizational tools and everyday practices shape and inform business strategies, with the aim of generating insights for service and strategic design. The research posed minimal risk to participants, and steps were taken to reduce the possibility of harm: all participants were informed of the research aims and gave their consent to take part. Data were collected, processed, and stored in compliance with EU GDPR (General Data Protection Regulation) requirements. Identifying details have been anonymized to protect both individuals and the client organization, and access to raw data remains restricted to the research teams. Methods combined interviews, organizational research, and observations, ensuring both depth and validity through triangulation. Our approach followed the principles outlined in the American Anthropological Association’s (AAA) Statement on Ethics, with particular attention to transparency about the researchers’ positionality, respect for participants, and commitment to preventing harm.

Acknowledgments

I am deeply grateful to my research and service design teammates at Sngular, whose support and patience made this work possible. My thanks also to Kirsten Bruckbauer, our EPIC session lead, for her thoughtful guidance and encouragement, and to David Rheams, whose generous editorial eye and engaging conversations helped shape this paper into what it is.

A Customer Relationship Management (CRM) system is a software platform designed to centralize and standardize how organizations manage interactions with clients and prospects. CRMs are more than technical tools: they structure workflows, mediate relationships, and define what counts as relevant knowledge about clients. How a CRM is designed and adopted therefore has direct implications for user experience, both internally (for employees who must work with it) and externally (for clients whose interactions are mediated through it).

The phrase is originally attributed to Aristotle.

The Jobs-To-Be-Done (JTBD) framework explains customer behavior in terms of the “job” they are trying to accomplish, often represented as a funnel of sequential stages (awareness, consideration, decision, etc.). Unlike customer journey maps, which trace the lived sequence of touchpoints and experiences, JTBD tends to abstract these into functional “jobs” that the customer tries to do by way of employing a product or service (see Kalbach 2020). In this case, the JTBD funnel was applied to the company itself—breaking down internal “jobs” to structure the new market and business strategy.
