Introduction

When I first arrived at the unassuming downtown Los Angeles office of StoryFile, almost every employee told me that I had to “go meet and talk to Sam.” That same day, two employees led me down a flight of stairs and into a darkened film studio. We settled in front of a ten-foot, dark box. With a few clicks of a button, the box lit up and a wax-like, older gentleman appeared before me. ‘Mister Sam’ – as some employees affectionately called him – seemed to lean forward, his attentive gaze ready to meet mine.

Mister Sam is not a living human. He is an animated re-creation of Samuel Walton, the now-deceased founder of Walmart. At the request of his family after his death, and in collaboration with his estate, archivists, and the employees at StoryFile, Sam Walton was “re-created” as Mister Sam.

I asked Mister Sam, “How are you today?” and he courteously replied in kind. I asked him about his past, his life, and Walmart – and each time he had a response for me. Having fully expected our interaction to feel clunky and mechanical, I was struck by how engaging it felt. Mister Sam looked directly into my eyes, his responses delivered as if for the first time. And yet the moment ended abruptly: when I ran out of questions, I turned to the StoryFile employees, who simply shut down the hologram box, turned off the lights, and led me back upstairs.

The encounter described above – intimate, engaging, but also strangely staged – captures the terrain of this paper. The paper emerges from my time conducting a corporate ethnography with StoryFile, a company that pioneers ‘conversational video AI’ to preserve and reanimate personal video stories of real people for the benefit of future generations. StoryFile is one of many companies now using artificial intelligence (AI) to replicate, re-create, and represent people, living and deceased. Their work sits at the intersection of deeply embodied human experiences – memory, legacy, grief – and sociotechnical systems that promise to preserve and extend them.

As products like these become increasingly integrated into personal, commercial, and heritage contexts, they raise questions not only about how these systems technically work, but also about the forms of intelligence used to produce and enact them. Technical systems are often imagined as if “intelligence” were a clean, self-contained property – logical, neutral, and precise (Crawford 2025). Yet such perspectives minimize how deeply these systems are entangled with technical, social, and cultural systems and practices. At StoryFile, what goes into making this technology includes the performances of people being recorded, the design choices that shape the interactions, cultural values, and corporate narratives. Locating these practices means recognizing that this technology is not simply a technical domain but deeply interdependent with other forms of ‘intelligence’ that are relational and distributed in practice.

To locate and examine these practices, I borrow Paul Dourish and Genevieve Bell’s (2014) myth/mess framework, which distinguishes between the aspirational narratives that drive AI development (myth) and the complex, often contradictory practices, decisions, and realities of its implementation (mess). Engaging with the myths, or stories, that StoryFile employees create is critical to understanding the imaginaries, discourses, and narratives that animate the employees at StoryFile and the things that they design and create. In turn, mess captures how narratives materialize through everyday decisions, practices, and technological affordances. Mess is not just error – it is lived sociotechnical reality, with compromises, workarounds, and negotiations.

Myth/mess is both a descriptive and analytical framework: it helps map how StoryFile’s makers narrate their technology into existence, and how those narratives meet the frictions of day-to-day design and production. Crucially, this framework also highlights how technologies like StoryFile are relational achievements. Intelligence here is multiple and distributed: it takes shape through employee decisions, the behavior of interview subjects, technical affordances that make interactions possible, and corporate narratives that frame the product’s meaning. What results is not simply myth nor mess, but a dynamic interplay of the two.

This paper examines two such myth/mess tensions:

- Authenticity: the notion that this technology captures a person authentically, in their own words, versus the performative, technical, and curatorial work required to create the feeling of an authentic, real-time interaction.

- Memory preservation: the promise of preserving and making accessible a whole person’s life story, versus the constraints of partial, mediated, and curated recordings through individual and corporate decisions.

In analyzing these tensions, this paper shows how two central promises – authenticity and memory preservation – shape both the imagined and enacted intelligences of conversational video AI. While StoryFile markets itself as capturing a ‘whole person’ and preserving their legacy, this ethnography suggests that these achievements are curated, partial, and produced through equally sociocultural and technical practices. Authenticity and legacy in conversational video AI are not captured; they are constructed.

Using the myth/mess framework, this paper highlights how organizations building AI systems, especially ones that model human likeness, must navigate tensions between visionary narratives and messy sociotechnical realities. This approach offers three contributions. First, it foregrounds how ‘intelligence’ in such systems is distributed across human and machine practices, making visible the cultural and technical work embedded in this technology. Second, it provides readers with an adaptable framework to locate similar tensions in other AI projects, identify where values are being embedded (or eroded), and understand the relational nature of these products. Third, it raises critical strategic and ethical questions in an emerging design space: Whose memory gets to be preserved, and who decides? How do we account for the affective labor such products invite or demand from users? And what might happen when reanimation is pursued through other, less carefully bounded, practices?

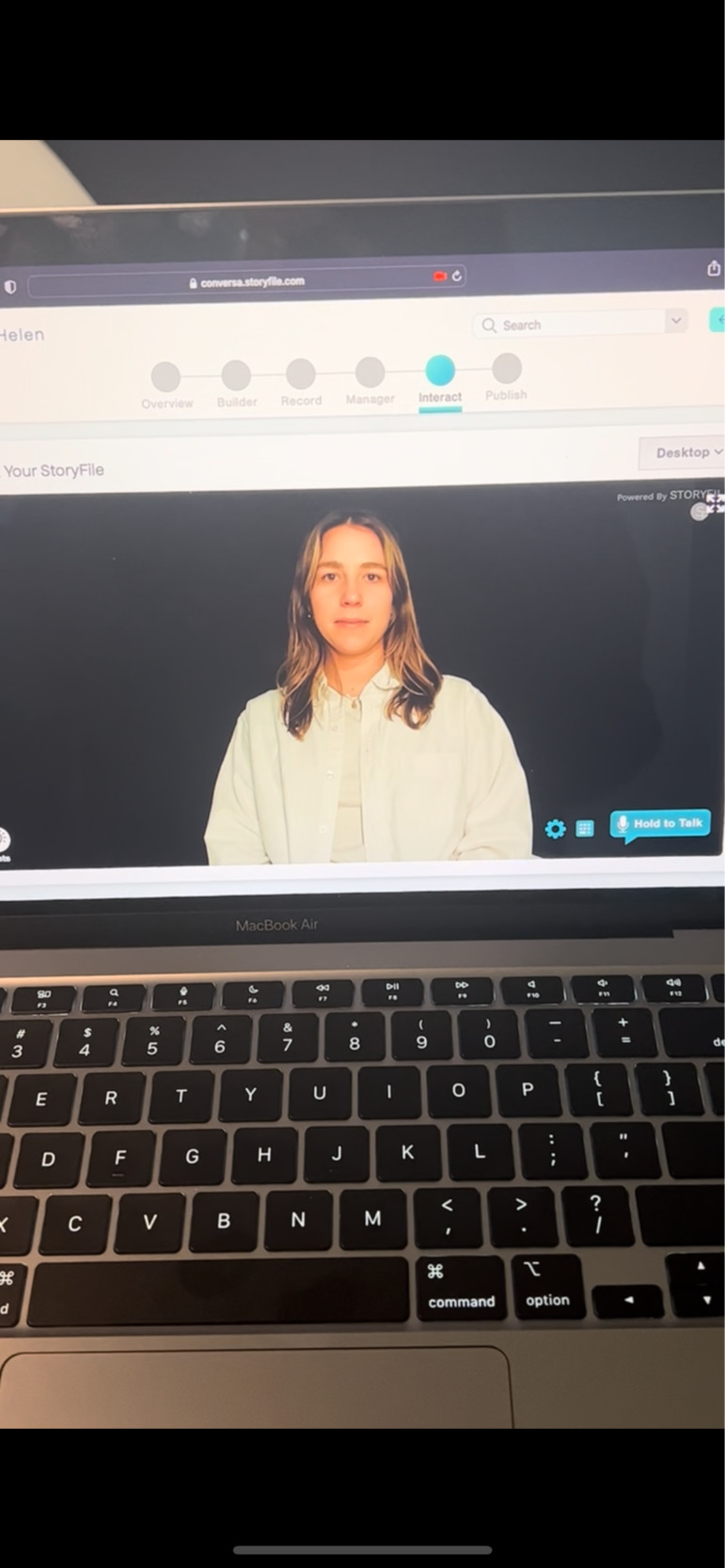

By situating these questions within ethnography, this paper aims to give designers, product leaders, and researchers the analytical and practical tools to better understand both the promises and the limits of AI-mediated human likeness. This research draws on interviews, observations, and months of secondary research carried out in 2023. During fieldwork, I spent a week at StoryFile’s Los Angeles headquarters, where I spoke with thirteen employees across roles – technologists, designers, sales executives, customer service representatives, and studio managers. Because customer access was limited, my focus remained on employees: the makers who frame the company’s promises, negotiate its technical choices, and carry its stories outward.[1] I observed daily practices, attended product demonstrations, and even created my own StoryFile in the company’s recording studio. These encounters provided a partial yet grounded sense of how employees envision and enact their technology. Borrowing from Nick Seaver (2017), my approach reflects what he calls “scavenging ethnography” – gathering fragments of information wherever access is possible. In this case, interviews and observations were supplemented by analysis of press releases, FAQs, social media posts, and news coverage, which together illustrated the multiple ways StoryFile’s technology is being imagined, described, and contested. Aside from the founders, whose identities are already public, all interview quotes have been anonymized, and participants gave consent for their words to be published.

Context: Collecting our Memories, Collecting Ourselves

The use of AI to replicate human likeness – capturing how humans look, sound, speak, move, and respond – is rapidly moving from speculative fiction into everyday consumer products. In the emerging market of “grief tech,” companies promise to preserve a person’s presence for interaction long after death (Ahuja 2022). Some services offer posthumous reanimation of a loved one through social media posts, photos, video, and other digital archives to compose an approximation of someone. Other companies, like StoryFile, focus on recording a living subject so that later generations can interact with them through the original recording material.

Grief tech services tap into a combination of technological capability and deeply rooted cultural desires. In Euro-American contexts in particular, there is a longstanding value placed on collecting memories as a way to outlast the decay of the body. This desire is bound up with the idea of ‘the self’ as stable, enduring, and preservable: a ‘thing’ that can be stored, curated, and passed on (Lamb 2014). Anthropologists have long noted the ways that collecting objects can serve to stabilize identity and culture (Clifford 1988). Museums, for example, assemble artifacts out of their original contexts to produce a continuous narrative that suggests adequate representation, obscuring the work of curation.

The practice of memory collection works under a similar cultural logic. Hallam and Hockey (2001) describe how, in Euro-American contexts, memories are treated as objects in need of possession. We store photographs, keep memorials, and save letters, believing that by controlling these materials, we control the memories they carry. In this cultural frame, human life is ephemeral and fleeting, while collected memory objects represent permanence. Memory-objects – whether a shoebox of photographs or a video recording – become symbolic anchors that promise to outlast our physical bodies.

Increasingly, both our memory objects and our means of collecting them are mediated by digital technology. Despite the constant material, labor, and regeneration required to store memories digitally, digital systems are often imbued with a “logic of permanence” (Chun 2011). Unlike human memory, which often forgets and misrepresents, machines are imagined to follow a mechanical logic that guarantees accuracy, retrieval, and storage (Van Dijck 2007). The notion that digital technology can extend human memory has been theorized and contested across anthropology, media studies, and STS. Theorists like Audrey Samson (2019) and Gordon Bell (2009) propose that digital technology can ‘externalize’ human memories through its ability to record and preserve information in a more stable, digital form. Others, like Viktor Mayer-Schönberger (2009), have even suggested that digital technology has facilitated the end of forgetting. On this view, increasing accessibility, storage capacity, and powerful software allow digital memory to transcend individual mortality.

StoryFile sits directly within this cultural logic. The company’s mission to preserve a person (through their stories) resonates with these longstanding yearnings for continuity. Founded in Los Angeles, the company develops conversational video AI that allows people to record answers to thousands of interview questions, which are then tagged, indexed, and made retrievable in real time through a question-and-answer interface. The company’s products span three markets: enterprise solutions for corporate communication and customer service, museum and heritage installations for historical figures, and StoryFile Life, a direct-to-consumer platform for personal use.

By recording someone’s stories in their own words and reanimating them through AI, StoryFile makes it possible to “talk” to them at any time, even after they are gone. Thus, StoryFile offers not just a record, but the promise of a lasting encounter that feels real and enduring. These promises hinge on two central ideals: that an interaction can be ‘authentic,’ and that a life story can be fully preserved. In practice, both of these myths are carefully constructed through technical, curatorial, and performative work. The following sections take up these two myth/mess tensions – authenticity and memory preservation – to show how StoryFile’s intelligences are imagined, built, and contested in the everyday work of making this technology.

Tension 1: Manufacturing Authenticity

At StoryFile, the goal of creating an authentic interaction is both a central narrative and a design challenge. The company’s tagline, ‘Authentic Interactions,’ reflects this value. In conversations, employees often equated authenticity with things like “their real voice,” “ground truth,” or “what people actually said.” They also frequently framed authenticity as an antidote to fears of deepfakes, bots, and virtual agents. As co-founder Stephen Smith put it:

“In a world of bots, droids, and avatars, we are trying to focus on authentic human interaction…the possibility for people to represent themselves through their own stories, in their own words.”

In these ways, authenticity is equated with originality – capturing a person’s real story, their real voice, in their own words. This value is mapped onto how conversations and stories are re-produced through StoryFile: interviews are made up of thousands of pre-written personal questions on various topics, and all recorded answers are unedited and retrievable through AI tagging systems. The company prides itself on ensuring that all content is original and accessible. In this way, an authentic interaction is preserved through the practices that maintain the original, truthful representation of the interview subject.

Regardless of the exact definition of authenticity, its conceptual power establishes a collective framework for what kind of practices are deemed appropriate in the creation of a StoryFile. Yet the everyday work of making one suggests that authenticity is not simply ‘captured’ – rather it is crafted, performed, and negotiated throughout the recording process and post-production.

Crafting Authenticity through Co-Presence

Beyond equating authenticity with original capture, employees also referred to authenticity as the feeling of being in the presence of the subject being represented. As one UX lead recalled, this process requires meticulous crafting:

“We’re always trying to get [customers] to feel like they’re in the presence of the person…to feel like they’ve met them. You want [customers] to suspend their disbelief…because then there’s a lot of knowledge transfer and experience transfer that happens.”

To sustain this suspension of disbelief, interviewees are directed to record a series of stock poses and responses: greetings, goodbyes, listening nods. They are told to look directly into the camera, to wear similar clothing for each session, and to consider maintaining a consistent background.

In post-production, engineers and designers employ different crafts so conversations appear seamless. Automatic speech recognition (ASR) detects an end user’s question, searches the database for a matching ‘variant,’ and cues the most relevant video clip. Digital effects specialists smooth choppy transitions between clips with morphing effects so conversations flow. As one employee explained:

“It’s really hard to take a full-body person and morph them into another clip… you’ve got to create a model that will decide how to move from one clip to the other in a way that looks more natural than a fade… more like when you are actually talking to a person.”

None of these interventions were described in conflict with authenticity. They are considered essential to achieving the company’s tagline of an ‘authentic interaction’ – a conversation that feels natural, fluid, and grounded in the ‘real’ person.
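The retrieval step described above can be made more concrete with a minimal sketch. This is an illustration under stated assumptions, not StoryFile's implementation: it assumes speech recognition has already turned the viewer's question into text, it swaps in simple word overlap (Jaccard similarity) for whatever matching the company actually uses, and the names `Clip`, `select_clip`, and the `threshold` value are hypothetical. What it shows is the basic shape of the interaction: every response is a pre-recorded clip, and a weak match falls back to a stock default answer.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Clip:
    """A recorded answer plus the question phrasings ('variants') tagged to it."""
    clip_id: str
    variants: tuple


# Hypothetical stock clip, e.g. "I don't have an answer for that right now."
FALLBACK = Clip("fallback", ())


def tokens(text):
    """Lowercase a question and split it into a set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Word-overlap similarity between two token sets, from 0.0 to 1.0."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def select_clip(transcript, clips, threshold=0.5):
    """Return the clip whose best variant is closest to the transcript.

    If no variant reaches the (assumed) threshold, return the fallback clip.
    """
    asked = tokens(transcript)
    best, best_score = FALLBACK, 0.0
    for clip in clips:
        for variant in clip.variants:
            score = jaccard(asked, tokens(variant))
            if score > best_score:
                best, best_score = clip, score
    return best if best_score >= threshold else FALLBACK


# Illustrative data only; these clips and variants are invented for the sketch.
clips = [
    Clip("greeting", ("how are you today", "how are you doing")),
    Clip("childhood", ("where did you grow up", "tell me about your childhood")),
]

print(select_clip("How are you today?", clips).clip_id)  # prints: greeting
```

Even in this toy form, the design choice is visible: the system never composes a new answer. It can only select among what was recorded, so the sense of a fluid conversation depends on how many variants were tagged and how gracefully the fallback is handled.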

Negotiating the Boundaries of the Authentic

The line between interventions that counted as authentic and those that did not was not shared by all employees or across all practices.

When StoryFile recorded a Holocaust survivor in both English and German, some discussion followed about using lip-synthesis technology to generate a Spanish version – translating the transcript and altering the lip movements to match. This idea promised wider accessibility, but it also risked being criticized as a deepfake.

Employees also discussed whether lip synthesis might be integrated in more limited ways – for instance, for routine filler content like the subject saying hello, goodbye, or default responses – so that interview sessions could focus on more meaningful storytelling. Yet even this suggestion raised questions. As one employee reflected:

“We could synthesize you saying yes or no, so we can focus on the more important stuff like getting you to tell me how you met your dad or mom…but once you start getting into synthetic videos, how can you label what’s authentic and what’s not?”

In these hypothetical discussions of how far to take certain interventions, authenticity shifts from a fixed quality to a boundary negotiated and contested in real time: is it about the content (what the person actually said), the form (their real voice and face), or the experience (feeling as though they are present)?

Embodied Encounters with Authenticity

My own experience recording a StoryFile highlighted how authenticity is felt and negotiated in practice. After testing out the experience with an employee one afternoon, I reflected on how the process felt both intimate and performative: repeating greetings, maintaining eye contact with the camera, and rehearsing stories in a way that felt like curating a future version of myself. Later, upon interacting with my own StoryFile, I experienced moments of recognition – hearing my own voice, seeing my own gestures – that felt ‘true’ to how I might come across in a conversation. Yet in other moments, like listening to an answer that felt somewhat forced or awkward, I felt overwhelmed by the performativity of the experience.

While my research did not extend to firsthand subjects and their experiences of being reanimated, public accounts of encounters with StoryFile suggest that this tension exists. In a New York Times Magazine feature, a son describes interacting with his father’s StoryFile, which was recorded after the father learned of his terminal illness (Dominus 2025). In some moments, the son feels disarmed and deeply affected by his father’s answers; in other moments, when the StoryFile provides default responses like “I don’t have an answer for that right now,” the illusion of conversation is broken – a reminder that he can only interact with this version of his father.

Taken together, encounters like these suggest that authenticity in this product is not inherent but contingent: continuously crafted by the subject’s performance, mediated by technical systems, and ultimately affirmed or unsettled in embodied and affective encounters with users.

Authenticity as a Distributed Achievement

Seen through the myth/mess lens, authenticity emerges from a distributed network of human and machine work: the subject’s performance in front of a camera; the technical systems that match and play clips; the design interventions that smooth transitions; the corporate narratives that surround the product. The final experience is a co-produced outcome of all of these, not simply the capture of the original recording.

What StoryFile considers authenticity is therefore less about the absence of mediation than about a carefully orchestrated and distributed presence – one that persuades the viewer to feel they are meeting the ‘real’ person, even as that encounter is manufactured through multiple layers of performance and technical mediation.

Tension 2: Memory + Legacy Preservation

A second key narrative observed at StoryFile was the belief that preserving a person’s memories through a StoryFile is a means of preserving that person’s life and legacy. As one employee put it, “mortality is my greatest enemy.” Other employees referenced moments of great success, when they were able to record someone before they passed, and, in contrast, missed opportunities, when they were unable to capture someone before they died. Such rhetoric reinforces the need to work against the clock to capture a person’s memories – otherwise, they will be lost with the person who passes. This belief in preservation through StoryFile was most clearly articulated in the launch of StoryFile Life, the company’s direct-to-consumer platform, through the participation of its inaugural customer, William Shatner.

The founder framed Shatner’s StoryFile in these terms:

“Generations in the future will be able to have a conversation with him. Not an avatar, not a deep fake, but with the real William Shatner answering their questions about his life and work. This changes the trajectory of the future – of how we experience life today, and how we share those lessons and stories for generations to come.”

Here, the StoryFile is positioned as a vessel for “the real” person – able to transmit knowledge, personality, and experience across generations. This capability is technologically sustained by the company’s retrieval system, which tags and indexes every answer, creating the sense that nothing is lost. Unlike oral history archives, StoryFile emphasizes total inclusion over curation.

Much like the promise of authenticity, the promise of permanence becomes complicated when exploring how this value is designed, negotiated, and distributed in practice. If StoryFile claims to preserve a person’s memory and their legacy, what exactly is being preserved – and what is left out?

Time Capsule Effect

A key part of StoryFile’s preservation process is interviewing and recording subjects. In the studio, interviewers rely on a repository of thousands of questions about a person’s life, legacy, and memories, along with any questions they want to create themselves. On the app, a customer can choose from an equally large repository of questions. All video material becomes the basis for a subject’s reanimation.

The affordances of interview stories create a particular, partial, and temporal effect. Once a StoryFile is complete, it is fixed at the moment of capture. It only reflects the stories the subject chose to tell, in the way they told them, at a particular time. It does not change, forget, or adapt in light of new relationships or contexts. Human memory, by contrast, is much more permeable: shaped and reshaped through retelling, altered by forgetting, and sustained in relation to others (Van Dijck 2007). StoryFile’s leadership acknowledges this difference. When I first sat down with one of their technologists, they described StoryFiles as “more of a time capsule” than a fixed person. While generative AI models could be layered in to produce new responses inspired by the source material, the company at the time was not pursuing this path, in keeping with its value of authenticity. They explained:

“People are not fixed, we are not this concrete thing…as long as we are alive, we’re constantly evolving and constantly changing. We are not a time capsule…so, for the AI to capture that is tricky, or it’s basically saying that it will continually evolve after you’re alive and it’s now become this other entity that has continued to evolve in a completely different way.”

The choice to preserve a “true” version of the person therefore fixes that version in time – delivering not a living individual but a curated record of one. The system can only recall what has been encoded, producing a memory that is transactional and repetitive rather than adaptive and relational.

Reanimating the Absent: The Case of Sam Walton

The question of preservation becomes even more complex in posthumous projects like StoryFile’s re-creation of Walmart’s founder Sam Walton. When StoryFile took on this project, they worked with his family, estate, and archivists to review 12,000 hours of video footage, choose what age he should resemble, and comb through archives of speeches, writing, and personal documents to curate a ‘script’ for Walton’s responses.

They then hired an actor with Walton’s likeness to rehearse and perform the script in StoryFile’s studio, mimicking his mannerisms and accent. In post-production, engineers overlaid facial features and voice characteristics drawn from historical footage of Walton, creating a seamless visual and auditory match.

The result was deeply affecting for viewers. As one Walmart Heritage Group director recalled: “people who knew Sam get the quivering chin. They get misty-eyed because of how realistic it is” (Zeitchick 2022).

‘Mister Sam’ was constructed through assemblages of mechanical capacities and human decisions. For example, his script was crafted from what Walton said, did, and thought. People made decisions about what to leave out and what to keep, which partially established what kind of responses and behaviors Sam would emulate. The script then gets ‘performed’ by StoryFile’s AI. Sam’s conversational abilities are dependent on the scripting, training, and programming of StoryFile’s AI mechanism. In the final product, Mister Sam is an assemblage of human decisions, technical processes, and archival fragments. His wholeness, realness, and preservation are contingent upon these distributed practices.

Legacy as a Constructed Intelligence

In both living and posthumous projects, StoryFile’s promise of legacy preservation depends on constant sociocultural and technical labor. What is delivered is not the person in full, but a version – curated, fixed in time, and shaped by the processes of its making.

While this version may satisfy a personal or cultural desire for permanence, such mediation introduces strategic and ethical implications – the flattening of identity, and the loss of the adaptive, embodied, and relational qualities that define human remembering. As with authenticity, the preservation of legacy is less a matter of capturing than of constructing. Moreover, it reveals how what is promised as technological inevitability is instead a matter of curated choices, design decisions, negotiated values, and technical affordances.

Designing in the Space Between Myth and Mess

This research offers a lens for organizations building and using AI systems that emulate human likeness. By tracing how authenticity and memory preservation are imagined, designed, and constructed at StoryFile, this analysis suggests that these products are not just technical systems – the intelligences of these systems are multiple and distributed. At StoryFile, authenticity and memory preservation are not produced by a single algorithm or individual. They emerge from the interplay of human storytelling, performance, design choices, technical affordances, and corporate narratives. Designing human likeness systems with AI therefore requires acknowledging that cultural and technical work co-produce the experience – and that both shape what is preserved, shown, or left out.

For EPIC practitioners, product leaders, and designers, the myth/mess framework is not simply a critique – it is a design tool for navigating the space between visionary narratives and the complexities of implementation. Identifying these tensions helps surface where values are embedded, where they erode, and how they may be contested in practice. In turn, it can guide decisions about principles, boundaries, and safeguards for similar products that emulate human likeness and mediate memory, mourning, and the embodied practices through which we form identity, remember, and relate to others.

Below are five considerations drawn from the myth/mess tensions of authenticity and memory preservation surfaced in this research:

1. Define and Communicate the Bounds of Likeness to Users

Human likeness – how people look, sound, speak, move, and respond – is not just captured. It is constructed through form (bodily representation of face, voice, and gestures), content (what is said and how), and experience (the perception of having a real conversation).

At StoryFile, decisions such as avoiding lip synthesis for translations were framed as authenticity choices: the form (mouth movements, voice) should remain tied to the original content (human story), even if it means the experience (perception of an authentic interaction) may be limited by language constraints. Moreover, the reliance on recorded stories and video constructed a certain kind of likeness, encounter, and means of remembering. Other grief tech companies, such as You, Only Virtual, take a broader approach, pulling in content like WhatsApp text threads, voice notes, and social media posts to assemble a posthumous likeness. Both approaches create boundaries for what likeness is crafted and what kind of encounter is possible – but those boundaries are culturally contingent and shift over time. Making them explicit to users builds trust and reduces the risk of later disillusionment.

2. Acknowledge and Account for Technical Mediation

As described above, technical mediation shapes what kind of likeness and interaction is possible, and these affordances can result in deeply moving, emotional, and even unsettling encounters. At the time of my research, StoryFile chose not to integrate generative AI that could produce novel responses beyond what had been recorded and tagged. This preserved a “fixed” version of the person but limited adaptability. By contrast, some grief tech products deliberately use generative tools to allow the likeness to “learn” and evolve based on the original set of data used. Such a choice fundamentally alters what is being preserved.

Instead of downplaying these decisions, companies can surface them in user interfaces, onboarding flows, or marketing language, allowing users to understand what kind of likeness and interaction they are signing up for. Such transparency is especially important in contexts of grief, where expectations are emotionally charged.

3. Resource for Emotional Labor

Even outside of grief tech, reanimating people for memory and legacy preservation creates emotional labor – for both users and those supporting them. At StoryFile, the work of supporting customers through these complex moments was distributed across customer service teams, interview guides, and online resources. Organizations building similar products should plan for this labor from the outset: training teams to respond to grief-related concerns, integrating resources for emotional support, and designing interactions that guide users through emotionally sensitive moments. The emotional labor that such products generate should be anticipated, not merely reacted to after the fact.

4. Plan for Endurance and Governance

When memories are offloaded into privatized systems, companies take on the responsibility of personal archives. Unlike museums or public archives, there are fewer governance models for stewarding these assets across decades.

Questions of endurance – like who will maintain archives if the company folds, or how likenesses will be handled when families disagree – are not purely legal; they are design questions. Addressing them requires planning for safeguarding, portability, and oversight, ideally through participatory processes that involve users and those who want to engage with their StoryFiles.

5. Consider Forgetting and Multiplicity

Freezing memories into a fixed, retrievable form changes the nature of remembering. Human memory is relational and adaptive; forgetting can be as vital to grieving as remembering. Systems that preserve a singular version risk flattening identity and diminishing the collective nature of remembering others.

Designers in this space can consider how to allow for multiplicity – the coexistence of different versions, contradictions, and omissions – and how to make space for forgetting as part of the product experience.

Applying Myth/Mess beyond Grief Tech

While the above considerations emerge from the particular case at StoryFile, the underlying dynamics are not unique to grief tech. These lessons extend to a wider range of AI likeness products: celebrity “digital twins,” customer service avatars, training simulators, and museum interactives. Across these domains, the myth/mess framework can guide teams to locate where aspirational narratives meet operational realities by tracing the compromises and workarounds that shape the final product. It encourages practitioners to map the sociocultural and technical labor that produces the experience, to identify where values are embedded or lost in translation, and to account for frictions between user expectations and system limitations. Most importantly, the framework makes visible the negotiations – technical, cultural, and organizational – that shape what is preserved, shown, or left out in the making of products that emulate human likeness. In practice, this means designing not only for the capabilities of the technology, but also for the emotional, ethical, and cultural implications of the encounter.

Conclusion

This paper has demonstrated how the myth/mess framework can be used to unpack design and strategic implications of AI systems that emulate human likeness, revealing the ways in which authenticity and memory preservation are not inherent properties of a system but constructed outcomes of distributed human and machine labor. In tracing the production of these values at StoryFile, this paper suggests that the “intelligence” of conversational video AI resides as much in the embodied practices, design interventions, and corporate narratives that shape it as in its technical affordances.

By situating these systems within an anthropological view of memory and collection, this analysis has highlighted the deeply cultural work of translating human experiences like storytelling, remembering, and representation into a digitally mediated form. Authenticity is not simply the absence of mediation; it is orchestrated through interview performance, technical practices, and design choices about what counts as authentic. Likewise, what the myth of memory and legacy preservation delivers in practice is less an exhaustive archive than a curated record, fixed at a moment in time and shaped by the priorities and constraints of its makers.

For organizations working in this space, the myth/mess framework offers more than critique: it provides an analytical tool for identifying where product narratives meet messy operational realities, where values are embedded or eroded, and how frictions between promise and practice can be navigated in ways that maintain user trust. Designing in the space between myth and mess means making these negotiations visible, planning for governance and endurance of personal archives, and designing for the emotional labor that accompanies these interactions.

The implications extend beyond “grief tech.” Whether in celebrity digital twins or customer service agents, replicating human likeness demands attention to the relational, cultural, and ethical contexts in which it is embedded. In these domains, as in StoryFile’s work, the question is not whether AI can replicate a person, but how – and to what ends – those replications are constructed. Recognizing the distributed nature of these technical systems allows us to design not just for performance, but for the human practices of remembering, mourning, and relating that they inevitably reshape.

About the Author

Helen Robertson is a design strategist and user researcher with a background in digital anthropology. Before earning her master’s at University College London, she led user-driven innovation projects for clients including Olympus Medical Systems, NHS, and USAID. She is now a Senior UX Researcher at Motorola Solutions, where she applies mixed methods to shape products and services in the public safety ecosystem.

The focus on employees also avoided the risk of ethical harm that could arise from interacting with end users who may be undergoing bereavement. Participation in this research was entirely voluntary, and all interview participants signed informed consent forms before participating. All collected data was stored securely and retained only for the purposes of this project.